|

PLearn 0.1

Filter between two linear layers of a 2D convolutional RBM.

#include <RBMConv2DLLParameters.h>

Public Member Functions | |

| RBMConv2DLLParameters (real the_learning_rate=0) | |

| Default constructor. | |

| RBMConv2DLLParameters (string down_types, string up_types, real the_learning_rate=0) | |

| Constructor from two string prototypes. | |

| virtual void | accumulatePosStats (const Vec &down_values, const Vec &up_values) |

| Accumulates positive phase statistics to *_pos_stats. | |

| virtual void | accumulateNegStats (const Vec &down_values, const Vec &up_values) |

| Accumulates negative phase statistics to *_neg_stats. | |

| virtual void | update () |

| Updates parameters according to contrastive divergence gradient. | |

| virtual void | update (const Vec &pos_down_values, const Vec &pos_up_values, const Vec &neg_down_values, const Vec &neg_up_values) |

| Updates parameters according to contrastive divergence gradient, not using the statistics but the explicit values passed. | |

| virtual void | clearStats () |

| Clear all information accumulated during stats. | |

| virtual void | computeUnitActivations (int start, int length, const Vec &activations) const |

| Computes the vectors of activation of "length" units, starting from "start", and concatenates them into "activations". | |

| virtual void | bpropUpdate (const Vec &input, const Vec &output, Vec &input_gradient, const Vec &output_gradient) |

| Adapt based on the output gradient: this method should only be called just after a corresponding fprop; it should be called with the same arguments as fprop for the first two arguments (and output should not have been modified since then). | |

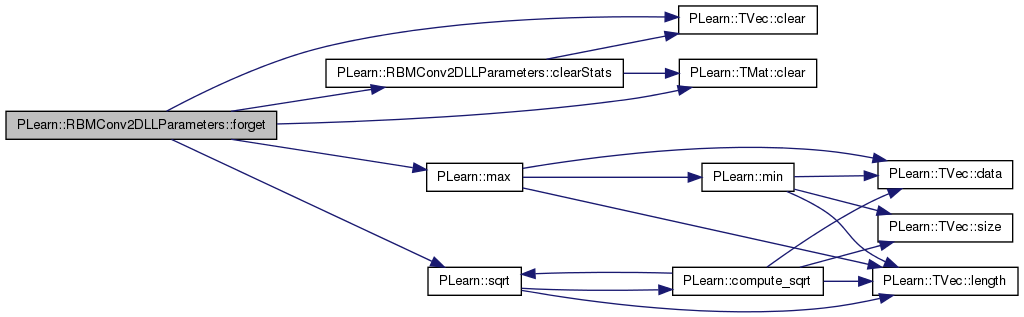

| virtual void | forget () |

| Resets the parameters to the state they would be in BEFORE starting training. | |

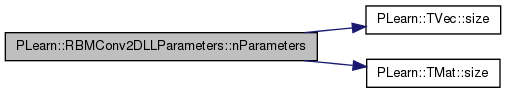

| virtual int | nParameters (bool share_up_params, bool share_down_params) const |

| Returns the number of parameters. | |

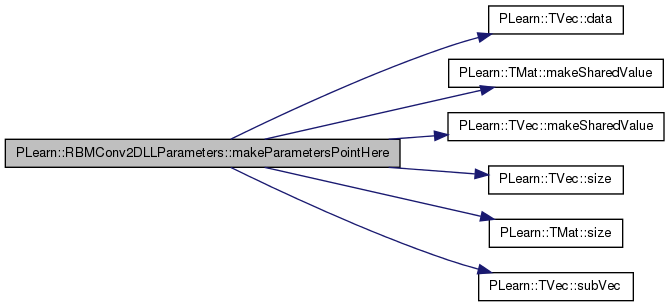

| virtual Vec | makeParametersPointHere (const Vec &global_parameters, bool share_up_params, bool share_down_params) |

| Make the parameters data be sub-vectors of the given global_parameters. | |

| virtual string | classname () const |

| virtual OptionList & | getOptionList () const |

| virtual OptionMap & | getOptionMap () const |

| virtual RemoteMethodMap & | getRemoteMethodMap () const |

| virtual RBMConv2DLLParameters * | deepCopy (CopiesMap &copies) const |

| virtual void | build () |

| Post-constructor. | |

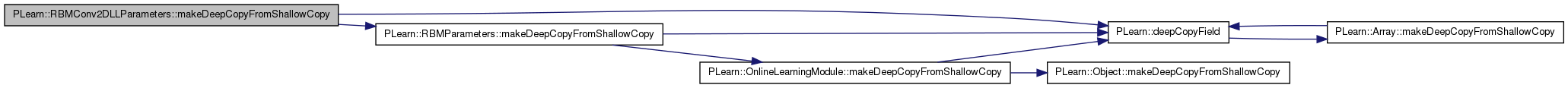

| virtual void | makeDeepCopyFromShallowCopy (CopiesMap &copies) |

| Transforms a shallow copy into a deep copy. | |

Static Public Member Functions | |

| static string | _classname_ () |

| static OptionList & | _getOptionList_ () |

| static RemoteMethodMap & | _getRemoteMethodMap_ () |

| static Object * | _new_instance_for_typemap_ () |

| static bool | _isa_ (const Object *o) |

| static void | _static_initialize_ () |

| static const PPath & | declaringFile () |

Public Attributes | |

| real | momentum |

| Momentum factor. | |

| int | down_image_length |

| Length of the down image. | |

| int | down_image_width |

| Width of the down image. | |

| int | up_image_length |

| Length of the up image. | |

| int | up_image_width |

| Width of the up image. | |

| int | kernel_step1 |

| "Vertical" step | |

| int | kernel_step2 |

| "Horizontal" step | |

| Mat | kernel |

| Matrix containing the convolution kernel (filter) | |

| Vec | up_units_bias |

| Element i contains the bias of up unit i. | |

| Vec | down_units_bias |

| Element i contains the bias of down unit i. | |

| int | kernel_length |

| Length of the kernel. | |

| int | kernel_width |

| Width of the kernel. | |

| Mat | kernel_pos_stats |

| Accumulates positive contribution to the weights' gradient. | |

| Mat | kernel_neg_stats |

| Accumulates negative contribution to the weights' gradient. | |

| Vec | up_units_bias_pos_stats |

| Accumulates positive contribution to the gradient of up_units_bias. | |

| Vec | up_units_bias_neg_stats |

| Accumulates negative contribution to the gradient of up_units_bias. | |

| Vec | down_units_bias_pos_stats |

| Accumulates positive contribution to the gradient of down_units_bias. | |

| Vec | down_units_bias_neg_stats |

| Accumulates negative contribution to the gradient of down_units_bias. | |

| Mat | kernel_inc |

| Used if momentum != 0. | |

| Vec | down_units_bias_inc |

| Vec | up_units_bias_inc |

Static Public Attributes | |

| static StaticInitializer | _static_initializer_ |

Static Protected Member Functions | |

| static void | declareOptions (OptionList &ol) |

| Declares the class options. | |

Private Types | |

| typedef RBMParameters | inherited |

Private Member Functions | |

| void | build_ () |

| This does the actual building. | |

Private Attributes | |

| Mat | down_image |

| Mat | up_image |

| Mat | down_image_gradient |

| Mat | up_image_gradient |

| Mat | kernel_gradient |

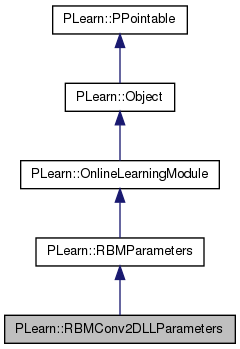

Filter between two linear layers of a 2D convolutional RBM.

Definition at line 55 of file RBMConv2DLLParameters.h.

typedef RBMParameters PLearn::RBMConv2DLLParameters::inherited [private] |

Reimplemented from PLearn::RBMParameters.

Definition at line 57 of file RBMConv2DLLParameters.h.

| PLearn::RBMConv2DLLParameters::RBMConv2DLLParameters | ( | real | the_learning_rate = 0 | ) |

Default constructor.

Definition at line 54 of file RBMConv2DLLParameters.cc.

| PLearn::RBMConv2DLLParameters::RBMConv2DLLParameters | ( | string | down_types, |

| string | up_types, | ||

| real | the_learning_rate = 0 |

||

| ) |

| string PLearn::RBMConv2DLLParameters::_classname_ | ( | ) | [static] |

Reimplemented from PLearn::RBMParameters.

Definition at line 52 of file RBMConv2DLLParameters.cc.

| OptionList & PLearn::RBMConv2DLLParameters::_getOptionList_ | ( | ) | [static] |

Reimplemented from PLearn::RBMParameters.

Definition at line 52 of file RBMConv2DLLParameters.cc.

| RemoteMethodMap & PLearn::RBMConv2DLLParameters::_getRemoteMethodMap_ | ( | ) | [static] |

Reimplemented from PLearn::RBMParameters.

Definition at line 52 of file RBMConv2DLLParameters.cc.

| bool PLearn::RBMConv2DLLParameters::_isa_ | ( | const Object * | o | ) | [static] |

Reimplemented from PLearn::RBMParameters.

Definition at line 52 of file RBMConv2DLLParameters.cc.

| Object * PLearn::RBMConv2DLLParameters::_new_instance_for_typemap_ | ( | ) | [static] |

Reimplemented from PLearn::Object.

Definition at line 52 of file RBMConv2DLLParameters.cc.

| void PLearn::RBMConv2DLLParameters::_static_initialize_ | ( | ) | [static] |

Reimplemented from PLearn::RBMParameters.

Definition at line 52 of file RBMConv2DLLParameters.cc.

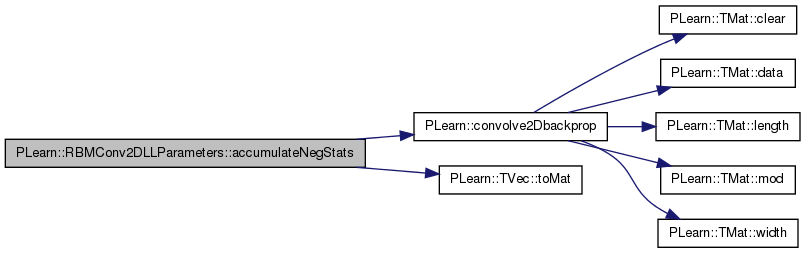

| void PLearn::RBMConv2DLLParameters::accumulateNegStats | ( | const Vec & | down_values, |

| const Vec & | up_values | ||

| ) | [virtual] |

Accumulates negative phase statistics to *_neg_stats.

Implements PLearn::RBMParameters.

Definition at line 250 of file RBMConv2DLLParameters.cc.

References PLearn::convolve2Dbackprop(), down_image, down_image_length, down_image_width, down_units_bias_neg_stats, kernel_neg_stats, kernel_step1, kernel_step2, PLearn::RBMParameters::neg_count, PLearn::TVec< T >::toMat(), up_image, up_image_length, up_image_width, and up_units_bias_neg_stats.

{

down_image = down_values.toMat( down_image_length, down_image_width );

up_image = up_values.toMat( up_image_length, up_image_width );

/* for i=0 to up_image_length:

* for j=0 to up_image_width:

* for l=0 to kernel_length:

* for m=0 to kernel_width:

* kernel_neg_stats(l,m) +=

* down_image(step1*i+l,step2*j+m) * up_image(i,j)

*/

convolve2Dbackprop( down_image, up_image, kernel_neg_stats,

kernel_step1, kernel_step2, true );

down_units_bias_neg_stats += down_values;

up_units_bias_neg_stats += up_values;

neg_count++;

}

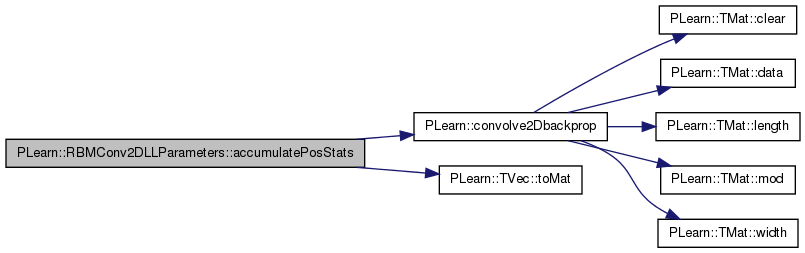

| void PLearn::RBMConv2DLLParameters::accumulatePosStats | ( | const Vec & | down_values, |

| const Vec & | up_values | ||

| ) | [virtual] |

Accumulates positive phase statistics to *_pos_stats.

Implements PLearn::RBMParameters.

Definition at line 228 of file RBMConv2DLLParameters.cc.

References PLearn::convolve2Dbackprop(), down_image, down_image_length, down_image_width, down_units_bias_pos_stats, kernel_pos_stats, kernel_step1, kernel_step2, PLearn::RBMParameters::pos_count, PLearn::TVec< T >::toMat(), up_image, up_image_length, up_image_width, and up_units_bias_pos_stats.

{

down_image = down_values.toMat( down_image_length, down_image_width );

up_image = up_values.toMat( up_image_length, up_image_width );

/* for i=0 to up_image_length:

* for j=0 to up_image_width:

* for l=0 to kernel_length:

* for m=0 to kernel_width:

* kernel_pos_stats(l,m) +=

* down_image(step1*i+l,step2*j+m) * up_image(i,j)

*/

convolve2Dbackprop( down_image, up_image, kernel_pos_stats,

kernel_step1, kernel_step2, true );

down_units_bias_pos_stats += down_values;

up_units_bias_pos_stats += up_values;

pos_count++;

}
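The pseudo-code comment in accumulatePosStats() and accumulateNegStats() can be written out as a standalone loop. The following is a minimal sketch, not PLearn's convolve2Dbackprop(): the `Image` type and the `accumulateKernelStats` function are hypothetical stand-ins introduced for illustration, and the index convention is taken from the comment above.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Row-major dense image; a hypothetical stand-in for PLearn's Mat.
struct Image {
    int length;               // number of rows
    int width;                // number of columns
    std::vector<double> data; // length * width values, row-major

    Image(int l, int w)
        : length(l), width(w), data(static_cast<std::size_t>(l) * w, 0.0) {}

    double& at(int i, int j) {
        return data[static_cast<std::size_t>(i) * width + j];
    }
    double at(int i, int j) const {
        return data[static_cast<std::size_t>(i) * width + j];
    }
};

// kernel_stats(l,m) += sum over (i,j) of
//     down(step1*i+l, step2*j+m) * up(i,j)
void accumulateKernelStats(const Image& down, const Image& up,
                           Image& kernel_stats, int step1, int step2)
{
    // Same geometric consistency that build_() checks for the kernel size.
    assert(kernel_stats.length == down.length - step1 * (up.length - 1));
    assert(kernel_stats.width == down.width - step2 * (up.width - 1));

    for (int i = 0; i < up.length; ++i)
        for (int j = 0; j < up.width; ++j)
            for (int l = 0; l < kernel_stats.length; ++l)
                for (int m = 0; m < kernel_stats.width; ++m)
                    kernel_stats.at(l, m) +=
                        down.at(step1 * i + l, step2 * j + m) * up.at(i, j);
}
```

Because the kernel is shared by every up unit, each kernel entry collects one product per up-image position, which is why the statistics matrix has the size of the kernel rather than of either image.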

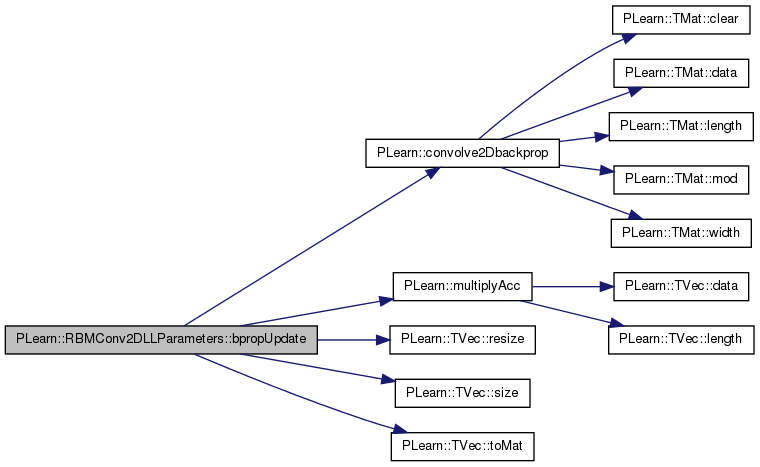

| void PLearn::RBMConv2DLLParameters::bpropUpdate | ( | const Vec & | input, |

| const Vec & | output, | ||

| Vec & | input_gradient, | ||

| const Vec & | output_gradient | ||

| ) | [virtual] |

Adapt based on the output gradient: this method should only be called just after a corresponding fprop; it should be called with the same arguments as fprop for the first two arguments (and output should not have been modified since then).

This version also computes the input gradient.

Since sub-classes are supposed to learn online, the object is ready to be used just after any bpropUpdate call. Note: the super-class provides a default implementation of the variant without input_gradient, which just calls bpropUpdate(input, output, input_gradient, output_gradient) and ignores the input gradient. The default super-class implementation of this variant, which also returns the input gradient, just raises a PLERROR.

Definition at line 615 of file RBMConv2DLLParameters.cc.

References PLearn::convolve2Dbackprop(), down_image, down_image_gradient, down_image_length, down_image_width, PLearn::RBMParameters::down_layer_size, kernel, kernel_gradient, kernel_step1, kernel_step2, PLearn::RBMParameters::learning_rate, PLearn::multiplyAcc(), PLASSERT, PLearn::TVec< T >::resize(), PLearn::TVec< T >::size(), PLearn::TVec< T >::toMat(), up_image, up_image_gradient, up_image_length, up_image_width, PLearn::RBMParameters::up_layer_size, and up_units_bias.

{

PLASSERT( input.size() == down_layer_size );

PLASSERT( output.size() == up_layer_size );

PLASSERT( output_gradient.size() == up_layer_size );

input_gradient.resize( down_layer_size );

down_image = input.toMat( down_image_length, down_image_width );

up_image = output.toMat( up_image_length, up_image_width );

down_image_gradient = input_gradient.toMat( down_image_length,

down_image_width );

up_image_gradient = output_gradient.toMat( up_image_length,

up_image_width );

// update input_gradient and kernel_gradient

convolve2Dbackprop( down_image, kernel,

up_image_gradient, down_image_gradient,

kernel_gradient,

kernel_step1, kernel_step2, false );

// kernel -= learning_rate * kernel_gradient

multiplyAcc( kernel, kernel_gradient, -learning_rate );

// (up) bias -= learning_rate * output_gradient

multiplyAcc( up_units_bias, output_gradient, -learning_rate );

}

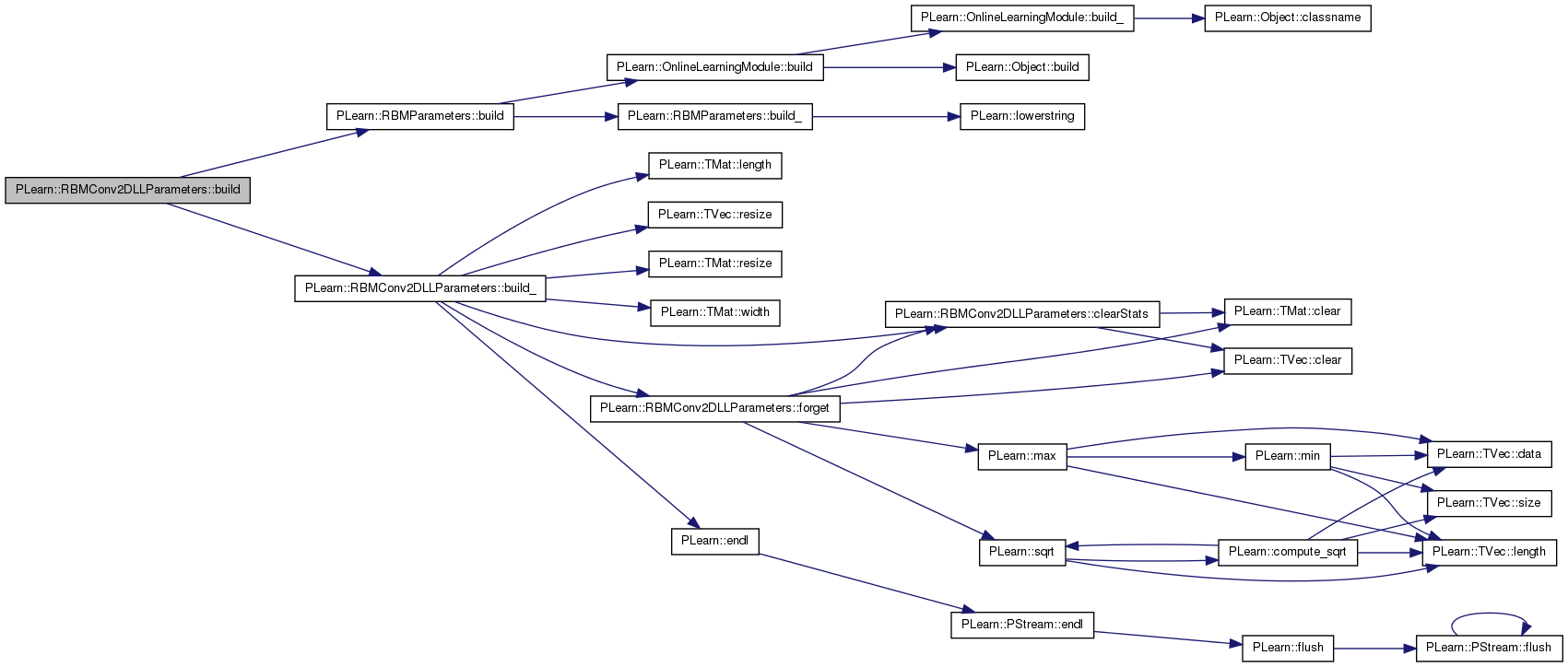

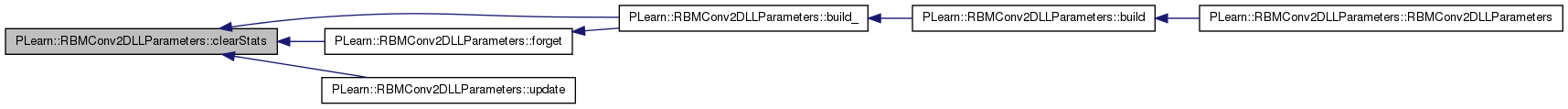

| void PLearn::RBMConv2DLLParameters::build | ( | ) | [virtual] |

Post-constructor.

The normal implementation should simply call inherited::build(), then this class's build_(). This method should be callable again at later times, after modifying some option fields to change the "architecture" of the object.

Reimplemented from PLearn::RBMParameters.

Definition at line 198 of file RBMConv2DLLParameters.cc.

References PLearn::RBMParameters::build(), and build_().

Referenced by RBMConv2DLLParameters().

{

inherited::build();

build_();

}

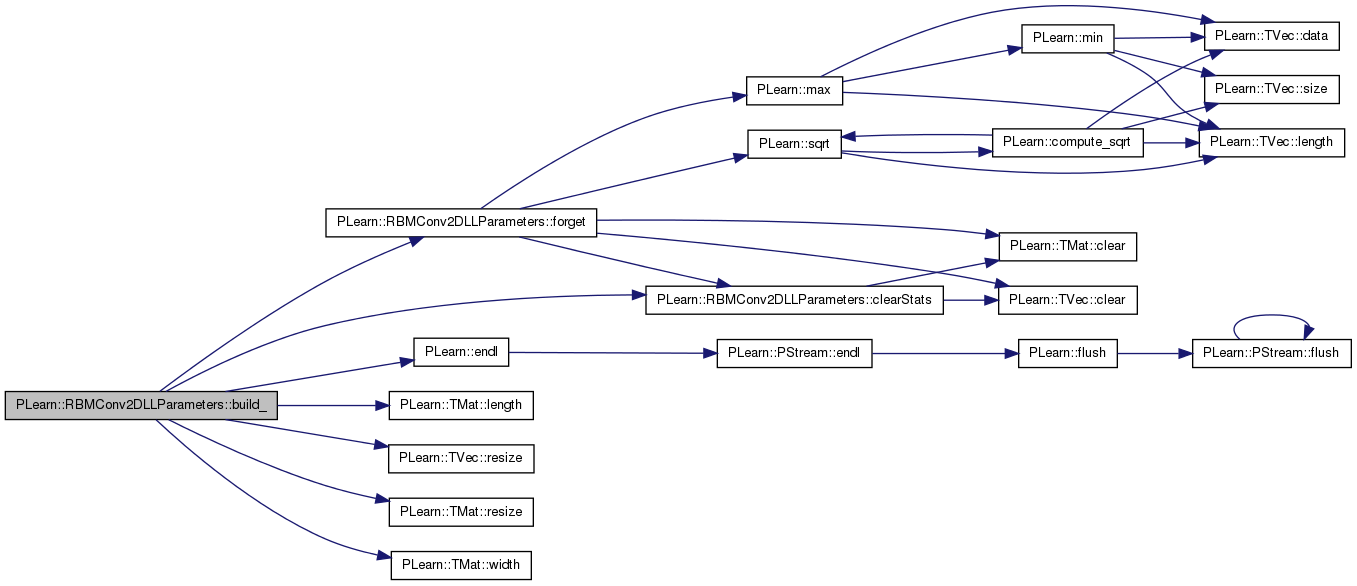

| void PLearn::RBMConv2DLLParameters::build_ | ( | ) | [private] |

This does the actual building.

Reimplemented from PLearn::RBMParameters.

Definition at line 122 of file RBMConv2DLLParameters.cc.

References clearStats(), down_image_length, down_image_width, PLearn::RBMParameters::down_layer_size, down_units_bias, down_units_bias_inc, down_units_bias_neg_stats, down_units_bias_pos_stats, PLearn::RBMParameters::down_units_types, PLearn::endl(), forget(), i, kernel, kernel_gradient, kernel_inc, kernel_length, kernel_neg_stats, kernel_pos_stats, kernel_step1, kernel_step2, kernel_width, PLearn::TMat< T >::length(), momentum, PLearn::OnlineLearningModule::output_size, PLASSERT, PLERROR, PLearn::TVec< T >::resize(), PLearn::TMat< T >::resize(), up_image_length, up_image_width, PLearn::RBMParameters::up_layer_size, up_units_bias, up_units_bias_inc, up_units_bias_neg_stats, up_units_bias_pos_stats, PLearn::RBMParameters::up_units_types, and PLearn::TMat< T >::width().

Referenced by build().

{

MODULE_LOG << "build_() called" << endl;

if( up_layer_size == 0 || down_layer_size == 0 )

{

MODULE_LOG << "build_() aborted" << endl;

return;

}

PLASSERT( down_image_length > 0 );

PLASSERT( down_image_width > 0 );

PLASSERT( down_image_length * down_image_width == down_layer_size );

PLASSERT( up_image_length > 0 );

PLASSERT( up_image_width > 0 );

PLASSERT( up_image_length * up_image_width == up_layer_size );

PLASSERT( kernel_step1 > 0 );

PLASSERT( kernel_step2 > 0 );

kernel_length = down_image_length - kernel_step1 * (up_image_length-1);

PLASSERT( kernel_length > 0 );

kernel_width = down_image_width - kernel_step2 * (up_image_width-1);

PLASSERT( kernel_width > 0 );

output_size = 0;

bool needs_forget = false; // do we need to reinitialize the parameters?

if( kernel.length() != kernel_length ||

kernel.width() != kernel_width )

{

kernel.resize( kernel_length, kernel_width );

needs_forget = true;

}

kernel_pos_stats.resize( kernel_length, kernel_width );

kernel_neg_stats.resize( kernel_length, kernel_width );

kernel_gradient.resize( kernel_length, kernel_width );

down_units_bias.resize( down_layer_size );

down_units_bias_pos_stats.resize( down_layer_size );

down_units_bias_neg_stats.resize( down_layer_size );

for( int i=0 ; i<down_layer_size ; i++ )

{

char dut_i = down_units_types[i];

if( dut_i != 'l' ) // not linear activation unit

PLERROR( "RBMConv2DLLParameters::build_() - value '%c' for"

" down_units_types[%d]\n"

"should be 'l'.\n",

dut_i, i );

}

up_units_bias.resize( up_layer_size );

up_units_bias_pos_stats.resize( up_layer_size );

up_units_bias_neg_stats.resize( up_layer_size );

for( int i=0 ; i<up_layer_size ; i++ )

{

char uut_i = up_units_types[i];

if( uut_i != 'l' ) // not linear activation unit

PLERROR( "RBMConv2DLLParameters::build_() - value '%c' for"

" up_units_types[%d]\n"

"should be 'l'.\n",

uut_i, i );

}

if( momentum != 0. )

{

kernel_inc.resize( kernel_length, kernel_width );

down_units_bias_inc.resize( down_layer_size );

up_units_bias_inc.resize( up_layer_size );

}

if( needs_forget )

forget();

clearStats();

}
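The kernel size is not an option: build_() derives it from the image sizes and the steps. As a rough illustration of that formula, the sketch below computes the extent along one axis; `kernelExtent` is a hypothetical helper name, not part of PLearn.

```cpp
#include <cassert>

// Kernel extent along one axis, following the formula used in build_():
//     kernel = down - step * (up - 1)
int kernelExtent(int down_extent, int up_extent, int step)
{
    assert(down_extent > 0 && up_extent > 0 && step > 0);
    const int kernel_extent = down_extent - step * (up_extent - 1);
    assert(kernel_extent > 0); // otherwise the geometry is inconsistent
    return kernel_extent;
}
```

For example, a down image of length 28, an up image of length 12 and a step of 2 give a kernel of length 28 - 2 * 11 = 6.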

| string PLearn::RBMConv2DLLParameters::classname | ( | ) | const [virtual] |

Reimplemented from PLearn::Object.

Definition at line 52 of file RBMConv2DLLParameters.cc.

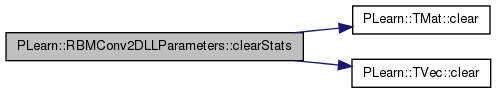

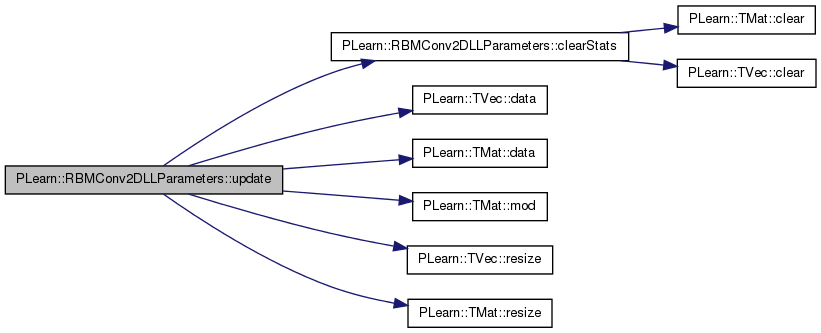

| void PLearn::RBMConv2DLLParameters::clearStats | ( | ) | [virtual] |

Clear all information accumulated during stats.

Implements PLearn::RBMParameters.

Definition at line 537 of file RBMConv2DLLParameters.cc.

References PLearn::TMat< T >::clear(), PLearn::TVec< T >::clear(), down_units_bias_neg_stats, down_units_bias_pos_stats, kernel_neg_stats, kernel_pos_stats, PLearn::RBMParameters::neg_count, PLearn::RBMParameters::pos_count, up_units_bias_neg_stats, and up_units_bias_pos_stats.

Referenced by build_(), forget(), and update().

{

kernel_pos_stats.clear();

kernel_neg_stats.clear();

down_units_bias_pos_stats.clear();

down_units_bias_neg_stats.clear();

up_units_bias_pos_stats.clear();

up_units_bias_neg_stats.clear();

pos_count = 0;

neg_count = 0;

}

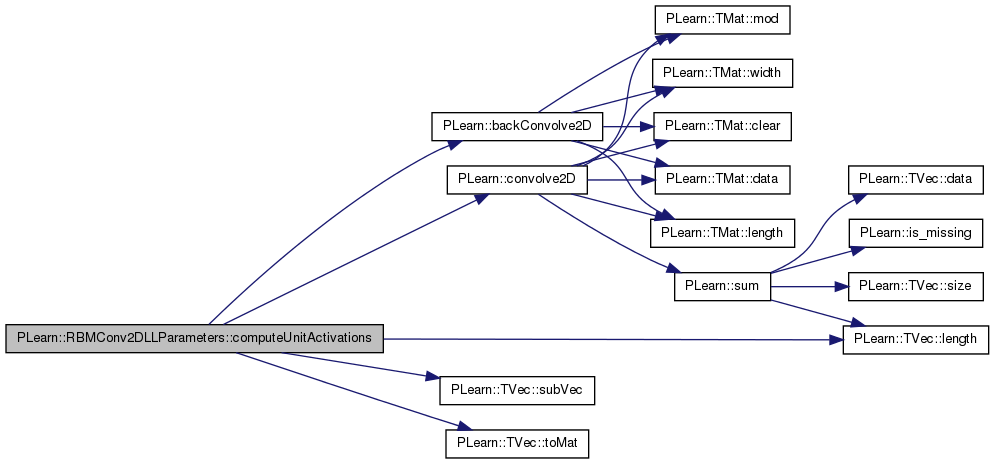

| void PLearn::RBMConv2DLLParameters::computeUnitActivations | ( | int | start, |

| int | length, | ||

| const Vec & | activations | ||

| ) | const [virtual] |

Computes the vectors of activation of "length" units, starting from "start", and concatenates them into "activations".

"start" indexes an up unit if "going_up", else a down unit.

Implements PLearn::RBMParameters.

Definition at line 553 of file RBMConv2DLLParameters.cc.

References PLearn::backConvolve2D(), PLearn::convolve2D(), PLearn::TVec< T >::length(), m, PLASSERT, PLearn::TVec< T >::subVec(), and PLearn::TVec< T >::toMat().

{

// Unoptimized way, that computes all the activations and return a subvec

PLASSERT( activations.length() == length );

if( going_up )

{

PLASSERT( start+length <= up_layer_size );

down_image = input_vec.toMat( down_image_length, down_image_width );

// special cases:

if( length == 1 )

{

real act = 0;

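real* k = kernel.data();

// "start" indexes an up unit: locate its row and column in the up image,

// then the top-left corner of its receptive field in the down image

real* di = down_image.data()

+ kernel_step1*(start / up_image_width)*down_image_width

+ kernel_step2*(start % up_image_width);

// advance one row in both the down image and the kernel

for( int l=0; l<kernel_length; l++, di+=down_image_width, k+=kernel.mod() )

for( int m=0; m<kernel_width; m++ )

act += di[m] * k[m];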

activations[0] = act;

}

else if( start == 0 && length == up_layer_size )

{

up_image = activations.toMat( up_image_length, up_image_width );

convolve2D( down_image, kernel, up_image,

kernel_step1, kernel_step2, false );

}

else

{

up_image = Mat( up_image_length, up_image_width );

convolve2D( down_image, kernel, up_image,

kernel_step1, kernel_step2, false );

activations << up_image.toVec().subVec( start, length );

}

activations += up_units_bias.subVec(start, length);

}

else

{

PLASSERT( start+length <= down_layer_size );

up_image = input_vec.toMat( up_image_length, up_image_width );

// special cases

if( start == 0 && length == down_layer_size )

{

down_image = activations.toMat( down_image_length,

down_image_width );

backConvolve2D( down_image, kernel, up_image,

kernel_step1, kernel_step2, false );

}

else

{

down_image = Mat( down_image_length, down_image_width );

backConvolve2D( down_image, kernel, up_image,

kernel_step1, kernel_step2, false );

activations << down_image.toVec().subVec( start, length );

}

activations += down_units_bias.subVec(start, length);

}

}
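For a single up unit, the activation is the bias plus the kernel applied to one window of the down image. The sketch below spells this out with plain vectors. It assumes that up unit `unit` sits at row `unit / up_width` and column `unit % up_width` of the up image, and that the window starts at `(step1 * row, step2 * column)`, as in the pseudo-code comments of accumulatePosStats(). The function name and signature are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Activation of one up unit: bias + sum over (l,m) of
//     down(step1*i + l, step2*j + m) * kernel(l, m)
// where (i,j) is the position of the unit in the up image.
// Both down and kernel are stored row-major.
double upUnitActivation(const std::vector<double>& down, int down_width,
                        const std::vector<double>& kernel,
                        int kernel_length, int kernel_width,
                        int up_width, int step1, int step2,
                        int unit, double bias)
{
    assert(down_width > 0 && up_width > 0 && unit >= 0);
    assert(kernel.size() ==
           static_cast<std::size_t>(kernel_length) * kernel_width);

    const int i = unit / up_width; // row of the unit in the up image
    const int j = unit % up_width; // column of the unit in the up image

    // The window must fit inside the down image.
    assert(step2 * j + kernel_width <= down_width);
    assert(static_cast<std::size_t>(step1 * i + kernel_length) * down_width
           <= down.size());

    double act = bias;
    for (int l = 0; l < kernel_length; ++l)
        for (int m = 0; m < kernel_width; ++m) {
            const std::size_t d =
                static_cast<std::size_t>(step1 * i + l) * down_width
                + (step2 * j + m);
            const std::size_t k =
                static_cast<std::size_t>(l) * kernel_width + m;
            act += down[d] * kernel[k];
        }
    return act;
}
```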

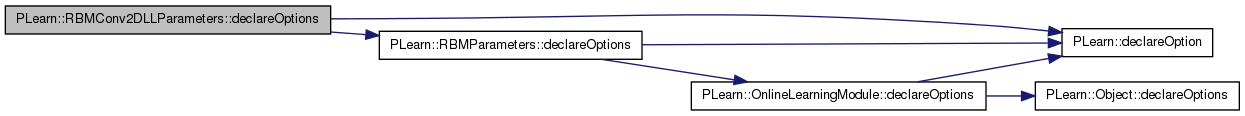

| void PLearn::RBMConv2DLLParameters::declareOptions | ( | OptionList & | ol | ) | [static, protected] |

Declares the class options.

Reimplemented from PLearn::RBMParameters.

Definition at line 70 of file RBMConv2DLLParameters.cc.

References PLearn::OptionBase::buildoption, PLearn::declareOption(), PLearn::RBMParameters::declareOptions(), down_image_length, down_image_width, down_units_bias, kernel, kernel_step1, kernel_step2, PLearn::OptionBase::learntoption, momentum, up_image_length, up_image_width, and up_units_bias.

{

declareOption(ol, "momentum", &RBMConv2DLLParameters::momentum,

OptionBase::buildoption,

"Momentum factor (should be between 0 and 1)");

declareOption(ol, "down_image_length",

&RBMConv2DLLParameters::down_image_length,

OptionBase::buildoption,

"Length of the down image");

declareOption(ol, "down_image_width",

&RBMConv2DLLParameters::down_image_width,

OptionBase::buildoption,

"Width of the down image");

declareOption(ol, "up_image_length",

&RBMConv2DLLParameters::up_image_length,

OptionBase::buildoption,

"Length of the up image");

declareOption(ol, "up_image_width",

&RBMConv2DLLParameters::up_image_width,

OptionBase::buildoption,

"Width of the up image");

declareOption(ol, "kernel_step1", &RBMConv2DLLParameters::kernel_step1,

OptionBase::buildoption,

"\"Vertical\" step of the convolution");

declareOption(ol, "kernel_step2", &RBMConv2DLLParameters::kernel_step2,

OptionBase::buildoption,

"\"Horizontal\" step of the convolution");

declareOption(ol, "kernel", &RBMConv2DLLParameters::kernel,

OptionBase::learntoption,

"Matrix containing the convolution kernel (filter)");

declareOption(ol, "up_units_bias",

&RBMConv2DLLParameters::up_units_bias,

OptionBase::learntoption,

"Element i contains the bias of up unit i");

declareOption(ol, "down_units_bias",

&RBMConv2DLLParameters::down_units_bias,

OptionBase::learntoption,

"Element i contains the bias of down unit i");

// Now call the parent class' declareOptions

inherited::declareOptions(ol);

}

| static const PPath& PLearn::RBMConv2DLLParameters::declaringFile | ( | ) | [inline, static] |

Reimplemented from PLearn::RBMParameters.

Definition at line 204 of file RBMConv2DLLParameters.h.

| RBMConv2DLLParameters * PLearn::RBMConv2DLLParameters::deepCopy | ( | CopiesMap & | copies | ) | const [virtual] |

Reimplemented from PLearn::RBMParameters.

Definition at line 52 of file RBMConv2DLLParameters.cc.

| void PLearn::RBMConv2DLLParameters::forget | ( | ) | [virtual] |

Resets the parameters to the state they would be in BEFORE starting training.

Note that this method is necessarily called from build().

Implements PLearn::OnlineLearningModule.

Definition at line 647 of file RBMConv2DLLParameters.cc.

References PLearn::TVec< T >::clear(), PLearn::TMat< T >::clear(), clearStats(), d, PLearn::RBMParameters::down_layer_size, down_units_bias, PLearn::RBMParameters::initialization_method, kernel, PLearn::max(), PLearn::OnlineLearningModule::random_gen, PLearn::sqrt(), PLearn::RBMParameters::up_layer_size, and up_units_bias.

Referenced by build_().

{

if( initialization_method == "zero" )

kernel.clear();

else

{

if( !random_gen )

random_gen = new PRandom();

real d = 1. / max( down_layer_size, up_layer_size );

if( initialization_method == "uniform_sqrt" )

d = sqrt( d );

random_gen->fill_random_uniform( kernel, -d, d );

}

down_units_bias.clear();

up_units_bias.clear();

clearStats();

}
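The half-width of the uniform initialization interval in forget() depends only on the layer sizes and on initialization_method. A small sketch of that rule follows; `initRange` is a hypothetical helper, and only the two method names that appear in the listing above ("zero" and "uniform_sqrt") are treated specially.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <string>

// Half-width d of the interval [-d, d] from which kernel entries are drawn.
// Returns 0 for "zero", meaning the kernel is simply cleared.
double initRange(int down_layer_size, int up_layer_size,
                 const std::string& method)
{
    assert(down_layer_size > 0 && up_layer_size > 0);
    if (method == "zero")
        return 0.0;

    double d = 1.0 / std::max(down_layer_size, up_layer_size);
    if (method == "uniform_sqrt")
        d = std::sqrt(d); // wider interval: 1 / sqrt(max size)
    return d;
}
```

With layer sizes 16 and 4, "uniform_sqrt" gives d = 0.25, while any other non-"zero" value gives d = 0.0625. The biases are cleared in every case.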

| OptionList & PLearn::RBMConv2DLLParameters::getOptionList | ( | ) | const [virtual] |

Reimplemented from PLearn::Object.

Definition at line 52 of file RBMConv2DLLParameters.cc.

| OptionMap & PLearn::RBMConv2DLLParameters::getOptionMap | ( | ) | const [virtual] |

Reimplemented from PLearn::Object.

Definition at line 52 of file RBMConv2DLLParameters.cc.

| RemoteMethodMap & PLearn::RBMConv2DLLParameters::getRemoteMethodMap | ( | ) | const [virtual] |

Reimplemented from PLearn::Object.

Definition at line 52 of file RBMConv2DLLParameters.cc.

| void PLearn::RBMConv2DLLParameters::makeDeepCopyFromShallowCopy | ( | CopiesMap & | copies | ) | [virtual] |

Transforms a shallow copy into a deep copy.

Reimplemented from PLearn::RBMParameters.

Definition at line 205 of file RBMConv2DLLParameters.cc.

References PLearn::deepCopyField(), down_image, down_image_gradient, down_units_bias, down_units_bias_inc, down_units_bias_neg_stats, down_units_bias_pos_stats, kernel, kernel_gradient, kernel_inc, kernel_neg_stats, kernel_pos_stats, PLearn::RBMParameters::makeDeepCopyFromShallowCopy(), up_image, up_image_gradient, up_units_bias, up_units_bias_inc, up_units_bias_neg_stats, and up_units_bias_pos_stats.

{

inherited::makeDeepCopyFromShallowCopy(copies);

deepCopyField(kernel, copies);

deepCopyField(up_units_bias, copies);

deepCopyField(down_units_bias, copies);

deepCopyField(kernel_pos_stats, copies);

deepCopyField(kernel_neg_stats, copies);

deepCopyField(kernel_gradient, copies);

deepCopyField(up_units_bias_pos_stats, copies);

deepCopyField(up_units_bias_neg_stats, copies);

deepCopyField(down_units_bias_pos_stats, copies);

deepCopyField(down_units_bias_neg_stats, copies);

deepCopyField(kernel_inc, copies);

deepCopyField(up_units_bias_inc, copies);

deepCopyField(down_units_bias_inc, copies);

deepCopyField(down_image, copies);

deepCopyField(up_image, copies);

deepCopyField(down_image_gradient, copies);

deepCopyField(up_image_gradient, copies);

}

| Vec PLearn::RBMConv2DLLParameters::makeParametersPointHere | ( | const Vec & | global_parameters, |

| bool | share_up_params, | ||

| bool | share_down_params | ||

| ) | [virtual] |

Make the parameters data be sub-vectors of the given global_parameters.

The argument should have size >= nParameters. The result is a Vec that starts just after this object's parameters end, i.e. result = global_parameters.subVec(nParameters(), global_parameters.size()-nParameters()). This makes it easy to chain calls of this method on multiple RBMParameters.

Implements PLearn::RBMParameters.

Definition at line 692 of file RBMConv2DLLParameters.cc.

References PLearn::TVec< T >::data(), down_units_bias, kernel, m, PLearn::TMat< T >::makeSharedValue(), PLearn::TVec< T >::makeSharedValue(), n, PLERROR, PLearn::TVec< T >::size(), PLearn::TMat< T >::size(), PLearn::TVec< T >::subVec(), and up_units_bias.

{

int n1=kernel.size();

int n2=up_units_bias.size();

int n3=down_units_bias.size();

int n = n1+(share_up_params?n2:0)+(share_down_params?n3:0); // should be = nParameters()

int m = global_parameters.size();

if (m<n)

PLERROR("RBMConv2DLLParameters::makeParametersPointHere: argument has length %d, should be longer than nParameters()=%d",m,n);

real* p = global_parameters.data();

kernel.makeSharedValue(p,n1);

p+=n1;

if (share_up_params)

{

up_units_bias.makeSharedValue(p,n2);

p+=n2;

}

if (share_down_params)

down_units_bias.makeSharedValue(p,n3);

return global_parameters.subVec(n,m-n);

}
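The chaining described above amounts to handing each parameter object the next free block of one global vector. The sketch below tracks only the offsets, not the shared storage itself. `ParamSizes`, `countParameters` and `claimParameters` are hypothetical names for illustration; in PLearn the returned Vec plays the role of the offset.

```cpp
#include <cassert>
#include <cstddef>

// Sizes of the parameters held by one object (hypothetical stand-in for
// kernel.size(), up_units_bias.size() and down_units_bias.size()).
struct ParamSizes {
    std::size_t kernel;
    std::size_t up_bias;
    std::size_t down_bias;
};

// Same count as nParameters(share_up_params, share_down_params).
std::size_t countParameters(const ParamSizes& s, bool share_up, bool share_down)
{
    return s.kernel
         + (share_up ? s.up_bias : std::size_t(0))
         + (share_down ? s.down_bias : std::size_t(0));
}

// Claims the next block of a global parameter vector of size global_size,
// starting at offset. Returns the offset at which the next object may start.
std::size_t claimParameters(const ParamSizes& s, std::size_t offset,
                            std::size_t global_size,
                            bool share_up, bool share_down)
{
    const std::size_t n = countParameters(s, share_up, share_down);
    assert(offset + n <= global_size); // the global vector must be big enough
    return offset + n;
}
```

Within one block the order is kernel first, then the up biases if shared, then the down biases if shared, matching the pointer arithmetic in the listing above.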

| int PLearn::RBMConv2DLLParameters::nParameters | ( | bool | share_up_params, |

| bool | share_down_params | ||

| ) | const [virtual] |

Returns the number of parameters.

Implements PLearn::RBMParameters.

Definition at line 681 of file RBMConv2DLLParameters.cc.

References down_units_bias, kernel, PLearn::TVec< T >::size(), PLearn::TMat< T >::size(), and up_units_bias.

{

return kernel.size() + (share_up_params?up_units_bias.size():0) +

(share_down_params?down_units_bias.size():0);

}

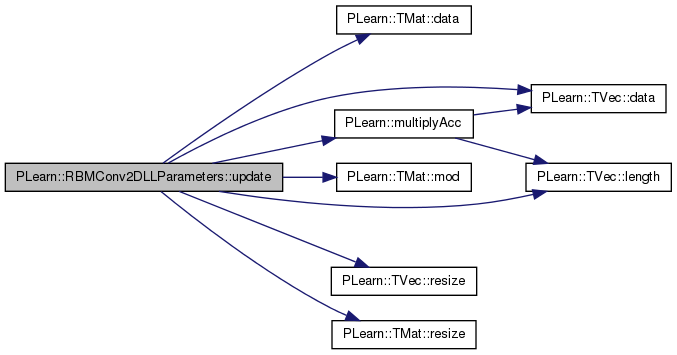

| void PLearn::RBMConv2DLLParameters::update | ( | ) | [virtual] |

Updates parameters according to contrastive divergence gradient.

Implements PLearn::RBMParameters.

Definition at line 271 of file RBMConv2DLLParameters.cc.

References clearStats(), PLearn::TVec< T >::data(), PLearn::TMat< T >::data(), PLearn::RBMParameters::down_layer_size, down_units_bias, down_units_bias_inc, down_units_bias_neg_stats, down_units_bias_pos_stats, i, j, kernel, kernel_inc, kernel_length, kernel_neg_stats, kernel_pos_stats, kernel_width, PLearn::RBMParameters::learning_rate, PLearn::TMat< T >::mod(), momentum, PLearn::RBMParameters::neg_count, PLearn::RBMParameters::pos_count, PLearn::TVec< T >::resize(), PLearn::TMat< T >::resize(), PLearn::RBMParameters::up_layer_size, up_units_bias, up_units_bias_inc, up_units_bias_neg_stats, and up_units_bias_pos_stats.

{

// updates parameters

// kernel -= learning_rate * (kernel_pos_stats/pos_count

// - kernel_neg_stats/neg_count)

real pos_factor = -learning_rate / pos_count;

real neg_factor = learning_rate / neg_count;

real* k_i = kernel.data();

real* kps_i = kernel_pos_stats.data();

real* kns_i = kernel_neg_stats.data();

int k_mod = kernel.mod();

int kps_mod = kernel_pos_stats.mod();

int kns_mod = kernel_neg_stats.mod();

if( momentum == 0. )

{

// no need to use kernel_inc

for( int i=0 ; i<kernel_length ; i++, k_i+=k_mod,

kps_i+=kps_mod, kns_i+=kns_mod )

for( int j=0 ; j<kernel_width ; j++ )

k_i[j] += pos_factor * kps_i[j] + neg_factor * kns_i[j];

}

else

{

// ensure that kernel_inc has the right size

kernel_inc.resize( kernel_length, kernel_width );

// The update rule becomes:

// kernel_inc = momentum * kernel_inc

// - learning_rate * (kernel_pos_stats/pos_count

// - kernel_neg_stats/neg_count);

// kernel += kernel_inc;

real* kinc_i = kernel_inc.data();

int kinc_mod = kernel_inc.mod();

for( int i=0 ; i<kernel_length ; i++, k_i += k_mod, kps_i += kps_mod,

kns_i += kns_mod, kinc_i += kinc_mod )

for( int j=0 ; j<kernel_width ; j++ )

{

kinc_i[j] = momentum * kinc_i[j]

+ pos_factor * kps_i[j] + neg_factor * kns_i[j];

k_i[j] += kinc_i[j];

}

}

// down_units_bias -= learning_rate * (down_units_bias_pos_stats/pos_count

// -down_units_bias_neg_stats/neg_count)

real* dub = down_units_bias.data();

real* dubps = down_units_bias_pos_stats.data();

real* dubns = down_units_bias_neg_stats.data();

if( momentum == 0. )

{

// no need to use down_units_bias_inc

for( int i=0 ; i<down_layer_size ; i++ )

dub[i] += pos_factor * dubps[i] + neg_factor * dubns[i];

}

else

{

// ensure that down_units_bias_inc has the right size

down_units_bias_inc.resize( down_layer_size );

// The update rule becomes:

// down_units_bias_inc =

// momentum * down_units_bias_inc

// - learning_rate * (down_units_bias_pos_stats/pos_count

// -down_units_bias_neg_stats/neg_count);

// down_units_bias += down_units_bias_inc;

real* dubinc = down_units_bias_inc.data();

for( int i=0 ; i<down_layer_size ; i++ )

{

dubinc[i] = momentum * dubinc[i]

+ pos_factor * dubps[i] + neg_factor * dubns[i];

dub[i] += dubinc[i];

}

}

// up_units_bias -= learning_rate * (up_units_bias_pos_stats/pos_count

// -up_units_bias_neg_stats/neg_count)

real* uub = up_units_bias.data();

real* uubps = up_units_bias_pos_stats.data();

real* uubns = up_units_bias_neg_stats.data();

if( momentum == 0. )

{

// no need to use up_units_bias_inc

for( int i=0 ; i<up_layer_size ; i++ )

uub[i] += pos_factor * uubps[i] + neg_factor * uubns[i];

}

else

{

// ensure that up_units_bias_inc has the right size

up_units_bias_inc.resize( up_layer_size );

// The update rule becomes:

// up_units_bias_inc =

// momentum * up_units_bias_inc

// - learning_rate * (up_units_bias_pos_stats/pos_count

// -up_units_bias_neg_stats/neg_count);

// up_units_bias += up_units_bias_inc;

real* uubinc = up_units_bias_inc.data();

for( int i=0 ; i<up_layer_size ; i++ )

{

uubinc[i] = momentum * uubinc[i]

+ pos_factor * uubps[i] + neg_factor * uubns[i];

uub[i] += uubinc[i];

}

}

clearStats();

}
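The kernel, the down biases and the up biases all follow the same element-wise rule in update(). The sketch below applies that rule to a flat vector. It is a simplification under stated assumptions: the increment buffer is always used, which matches the momentum == 0 branch of the listing because the previous increment is then multiplied by zero. The name `cdUpdate` is hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// inc    = momentum * inc
//          - learning_rate * (pos_stats / pos_count - neg_stats / neg_count)
// param += inc
void cdUpdate(std::vector<double>& param, std::vector<double>& inc,
              const std::vector<double>& pos_stats,
              const std::vector<double>& neg_stats,
              int pos_count, int neg_count,
              double learning_rate, double momentum)
{
    assert(pos_count > 0 && neg_count > 0);
    assert(param.size() == pos_stats.size());
    assert(param.size() == neg_stats.size());

    inc.resize(param.size(), 0.0); // ensure the increment has the right size

    const double pos_factor = -learning_rate / pos_count;
    const double neg_factor = learning_rate / neg_count;

    for (std::size_t i = 0; i < param.size(); ++i) {
        inc[i] = momentum * inc[i]
               + pos_factor * pos_stats[i] + neg_factor * neg_stats[i];
        param[i] += inc[i];
    }
}
```

Dividing by pos_count and neg_count turns the accumulated sums into averages, so the step size does not depend on how many examples were accumulated before the call.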

| void PLearn::RBMConv2DLLParameters::update | ( | const Vec & | pos_down_values, |

| const Vec & | pos_up_values, | ||

| const Vec & | neg_down_values, | ||

| const Vec & | neg_up_values | ||

| ) | [virtual] |

Updates parameters according to contrastive divergence gradient, not using the statistics but the explicit values passed.

Reimplemented from PLearn::RBMParameters.

Definition at line 384 of file RBMConv2DLLParameters.cc.

References PLearn::TMat< T >::data(), PLearn::TVec< T >::data(), down_image_length, down_image_width, PLearn::RBMParameters::down_layer_size, down_units_bias, down_units_bias_inc, i, j, kernel, kernel_inc, kernel_length, kernel_step1, kernel_step2, kernel_width, PLearn::RBMParameters::learning_rate, PLearn::TVec< T >::length(), m, PLearn::TMat< T >::mod(), momentum, PLearn::multiplyAcc(), PLASSERT, PLearn::TVec< T >::resize(), PLearn::TMat< T >::resize(), up_image_length, up_image_width, PLearn::RBMParameters::up_layer_size, up_units_bias, and up_units_bias_inc.

{

PLASSERT( pos_up_values.length() == up_layer_size );

PLASSERT( neg_up_values.length() == up_layer_size );

PLASSERT( pos_down_values.length() == down_layer_size );

PLASSERT( neg_down_values.length() == down_layer_size );

/* for i=0 to up_image_length:

* for j=0 to up_image_width:

* for l=0 to kernel_length:

* for m=0 to kernel_width:

* kernel(l,m) -= learning_rate *

* ( pos_down_image(step1*i+l,step2*j+m) * pos_up_image(i,j)

* - neg_down_image(step1*i+l,step2*j+m) * neg_up_image(i,j) )

*/

real* puv = pos_up_values.data();

real* nuv = neg_up_values.data();

real* pdv = pos_down_values.data();

real* ndv = neg_down_values.data();

int k_mod = kernel.mod();

if( momentum == 0. )

{

for( int i=0; i<up_image_length; i++,

puv+=up_image_width,

nuv+=up_image_width,

pdv+=kernel_step1*down_image_width,

ndv+=kernel_step1*down_image_width )

{

// copies to iterate over columns

real* pdv1 = pdv;

real* ndv1 = ndv;

for( int j=0; j<up_image_width; j++,

pdv1+=kernel_step2,

ndv1+=kernel_step2 )

{

real* k = kernel.data();

real* pdv2 = pdv1; // copy to iterate over sub-rows

real* ndv2 = ndv1;

real puv_ij = puv[j];

real nuv_ij = nuv[j];

for( int l=0; l<kernel_length; l++, k+=k_mod,

pdv2+=down_image_width,

ndv2+=down_image_width )

for( int m=0; m<kernel_width; m++ )

k[m] += learning_rate *

(ndv2[m] * nuv_ij - pdv2[m] * puv_ij);

}

}

}

else

{

// ensure that kernel_inc has the right size

kernel_inc.resize( kernel_length, kernel_width );

kernel_inc *= momentum;

int kinc_mod = kernel_inc.mod();

for( int i=0; i<up_image_length; i++,

puv+=up_image_width,

nuv+=up_image_width,

pdv+=kernel_step1*down_image_width,

ndv+=kernel_step1*down_image_width )

{

// copies to iterate over columns

real* pdv1 = pdv;

real* ndv1 = ndv;

for( int j=0; j<up_image_width; j++,

pdv1+=kernel_step2,

ndv1+=kernel_step2 )

{

real* kinc = kernel_inc.data();

real* pdv2 = pdv1; // copy to iterate over sub-rows

real* ndv2 = ndv1;

real puv_ij = puv[j];

real nuv_ij = nuv[j];

for( int l=0; l<kernel_length; l++, kinc+=kinc_mod,

pdv2+=down_image_width,

ndv2+=down_image_width )

for( int m=0; m<kernel_width; m++ )

kinc[m] += ndv2[m] * nuv_ij - pdv2[m] * puv_ij;

}

}

multiplyAcc( kernel, kernel_inc, learning_rate );

}

// down_units_bias -= learning_rate * ( v_0 - v_1 )

real* dub = down_units_bias.data();

// pdv and ndv were advanced by the kernel loops above, so reset them

pdv = pos_down_values.data();

ndv = neg_down_values.data();

if( momentum == 0. )

{

// no need to use down_units_bias_inc

for( int j=0 ; j<down_layer_size ; j++ )

dub[j] += learning_rate * ( ndv[j] - pdv[j] );

}

else

{

// ensure that down_units_bias_inc has the right size

down_units_bias_inc.resize( down_layer_size );

// The update rule becomes:

// down_units_bias_inc = momentum * down_units_bias_inc

// - learning_rate * ( v_0 - v_1 )

// down_units_bias += down_units_bias_inc;

real* dubinc = down_units_bias_inc.data();

for( int j=0 ; j<down_layer_size ; j++ )

{

dubinc[j] = momentum * dubinc[j]

+ learning_rate * ( ndv[j] - pdv[j] );

dub[j] += dubinc[j];

}

}

// up_units_bias -= learning_rate * ( h_0 - h_1 )

real* uub = up_units_bias.data();

puv = pos_up_values.data();

nuv = neg_up_values.data();

if( momentum == 0. )

{

// no need to use up_units_bias_inc

for( int i=0 ; i<up_layer_size ; i++ )

uub[i] += learning_rate * (nuv[i] - puv[i] );

}

else

{

// ensure that up_units_bias_inc has the right size

up_units_bias_inc.resize( up_layer_size );

// The update rule becomes:

// up_units_bias_inc =

// momentum * up_units_bias_inc

// - learning_rate * ( h_0 - h_1 );

// up_units_bias += up_units_bias_inc;

real* uubinc = up_units_bias_inc.data();

for( int i=0 ; i<up_layer_size ; i++ )

{

uubinc[i] = momentum * uubinc[i]

+ learning_rate * ( nuv[i] - puv[i] );

uub[i] += uubinc[i];

}

}

}
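The pseudocode comment at the top of this overload can be written out with explicit indices instead of pointer arithmetic. The following is a minimal sketch under stated assumptions, not the PLearn implementation: `Conv2DShape` and `cdKernelUpdate` are hypothetical names, images and the kernel are stored row-major in `std::vector<double>`, and momentum is left out.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for the size members used by update().
struct Conv2DShape {
    int down_length, down_width;     // visible ("down") image
    int up_length, up_width;         // hidden ("up") image
    int kernel_length, kernel_width; // convolution kernel
    int step1, step2;                // vertical / horizontal step
};

// kernel(l,m) -= learning_rate *
//   ( pos_down(step1*i+l, step2*j+m) * pos_up(i,j)
//   - neg_down(step1*i+l, step2*j+m) * neg_up(i,j) ), summed over all (i,j).
void cdKernelUpdate(std::vector<double>& kernel, const Conv2DShape& s,
                    const std::vector<double>& pos_down,
                    const std::vector<double>& pos_up,
                    const std::vector<double>& neg_down,
                    const std::vector<double>& neg_up,
                    double learning_rate)
{
    const std::size_t down_size = static_cast<std::size_t>(s.down_length) * s.down_width;
    const std::size_t up_size = static_cast<std::size_t>(s.up_length) * s.up_width;
    assert(kernel.size() == static_cast<std::size_t>(s.kernel_length) * s.kernel_width);
    assert(pos_down.size() == down_size && neg_down.size() == down_size);
    assert(pos_up.size() == up_size && neg_up.size() == up_size);

    for (int i = 0; i < s.up_length; ++i) {
        for (int j = 0; j < s.up_width; ++j) {
            const double pu = pos_up[i * s.up_width + j];
            const double nu = neg_up[i * s.up_width + j];
            for (int l = 0; l < s.kernel_length; ++l) {
                for (int m = 0; m < s.kernel_width; ++m) {
                    // Position in the down image covered by kernel(l,m)
                    // when the kernel is placed at up position (i,j).
                    const int d = (s.step1 * i + l) * s.down_width
                                + (s.step2 * j + m);
                    kernel[l * s.kernel_width + m] -=
                        learning_rate * (pos_down[d] * pu - neg_down[d] * nu);
                }
            }
        }
    }
}
```

Both outer loops run over the up image, because each up unit (i,j) contributes one kernel-sized patch of the down image to the gradient. When the positive and negative phase values are identical, the kernel is left unchanged.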

Reimplemented from PLearn::RBMParameters.

Definition at line 204 of file RBMConv2DLLParameters.h.

Mat PLearn::RBMConv2DLLParameters::down_image [mutable, private]

Definition at line 228 of file RBMConv2DLLParameters.h.

Referenced by accumulateNegStats(), accumulatePosStats(), bpropUpdate(), and makeDeepCopyFromShallowCopy().

Mat PLearn::RBMConv2DLLParameters::down_image_gradient [mutable, private]

Definition at line 230 of file RBMConv2DLLParameters.h.

Referenced by bpropUpdate(), and makeDeepCopyFromShallowCopy().

Length of the down image.

Definition at line 66 of file RBMConv2DLLParameters.h.

Referenced by accumulateNegStats(), accumulatePosStats(), bpropUpdate(), build_(), declareOptions(), and update().

Width of the down image.

Definition at line 69 of file RBMConv2DLLParameters.h.

Referenced by accumulateNegStats(), accumulatePosStats(), bpropUpdate(), build_(), declareOptions(), and update().

Element i contains the bias of down unit i.

Definition at line 92 of file RBMConv2DLLParameters.h.

Referenced by build_(), declareOptions(), forget(), makeDeepCopyFromShallowCopy(), makeParametersPointHere(), nParameters(), and update().

Definition at line 119 of file RBMConv2DLLParameters.h.

Referenced by build_(), makeDeepCopyFromShallowCopy(), and update().

Accumulates negative contribution to the gradient of down_units_bias.

Definition at line 115 of file RBMConv2DLLParameters.h.

Referenced by accumulateNegStats(), build_(), clearStats(), makeDeepCopyFromShallowCopy(), and update().

Accumulates positive contribution to the gradient of down_units_bias.

Definition at line 113 of file RBMConv2DLLParameters.h.

Referenced by accumulatePosStats(), build_(), clearStats(), makeDeepCopyFromShallowCopy(), and update().

Matrix containing the convolution kernel (filter)

Definition at line 86 of file RBMConv2DLLParameters.h.

Referenced by bpropUpdate(), build_(), declareOptions(), forget(), makeDeepCopyFromShallowCopy(), makeParametersPointHere(), nParameters(), and update().

Definition at line 232 of file RBMConv2DLLParameters.h.

Referenced by bpropUpdate(), build_(), and makeDeepCopyFromShallowCopy().

Used if momentum != 0.

Definition at line 118 of file RBMConv2DLLParameters.h.

Referenced by build_(), makeDeepCopyFromShallowCopy(), and update().

Length of the kernel.

Definition at line 97 of file RBMConv2DLLParameters.h.

Accumulates negative contribution to the weights' gradient.

Definition at line 106 of file RBMConv2DLLParameters.h.

Referenced by accumulateNegStats(), build_(), clearStats(), makeDeepCopyFromShallowCopy(), and update().

Accumulates positive contribution to the weights' gradient.

Definition at line 103 of file RBMConv2DLLParameters.h.

Referenced by accumulatePosStats(), build_(), clearStats(), makeDeepCopyFromShallowCopy(), and update().

"Vertical" step

Definition at line 78 of file RBMConv2DLLParameters.h.

Referenced by accumulateNegStats(), accumulatePosStats(), bpropUpdate(), build_(), declareOptions(), and update().

"Horizontal" step

Definition at line 81 of file RBMConv2DLLParameters.h.

Referenced by accumulateNegStats(), accumulatePosStats(), bpropUpdate(), build_(), declareOptions(), and update().

Width of the kernel.

Definition at line 100 of file RBMConv2DLLParameters.h.

Momentum factor.

Definition at line 63 of file RBMConv2DLLParameters.h.

Referenced by build_(), declareOptions(), and update().

Mat PLearn::RBMConv2DLLParameters::up_image [mutable, private]

Definition at line 229 of file RBMConv2DLLParameters.h.

Referenced by accumulateNegStats(), accumulatePosStats(), bpropUpdate(), and makeDeepCopyFromShallowCopy().

Mat PLearn::RBMConv2DLLParameters::up_image_gradient [mutable, private]

Definition at line 231 of file RBMConv2DLLParameters.h.

Referenced by bpropUpdate(), and makeDeepCopyFromShallowCopy().

Length of the up image.

Definition at line 72 of file RBMConv2DLLParameters.h.

Referenced by accumulateNegStats(), accumulatePosStats(), bpropUpdate(), build_(), declareOptions(), and update().

Width of the up image.

Definition at line 75 of file RBMConv2DLLParameters.h.

Referenced by accumulateNegStats(), accumulatePosStats(), bpropUpdate(), build_(), declareOptions(), and update().
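The image, kernel and step sizes are tied together by the indexing used in update(): the down image is read at row `kernel_step1*i + l` and column `kernel_step2*j + m`, so every kernel placement has to fit inside the down image. The sketch below computes the largest up size that satisfies this constraint along one dimension. `upImageSize` is a hypothetical helper; how PLearn itself derives or checks these sizes (presumably in build_()) is not shown on this page, so treat the formula as an assumption.

```cpp
#include <cassert>

// Hypothetical helper (not part of PLearn): largest number of kernel
// placements along one dimension such that
//   step * (up_size - 1) + kernel_size <= down_size.
int upImageSize(int down_size, int kernel_size, int step)
{
    assert(step > 0);
    assert(kernel_size > 0 && kernel_size <= down_size);
    return (down_size - kernel_size) / step + 1;
}
```

For example, under this assumption a down image of length 28 with a kernel of length 5 and a step of 1 gives an up image of length 24.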

Element i contains the bias of up unit i.

Definition at line 89 of file RBMConv2DLLParameters.h.

Referenced by bpropUpdate(), build_(), declareOptions(), forget(), makeDeepCopyFromShallowCopy(), makeParametersPointHere(), nParameters(), and update().

Definition at line 120 of file RBMConv2DLLParameters.h.

Referenced by build_(), makeDeepCopyFromShallowCopy(), and update().

Accumulates negative contribution to the gradient of up_units_bias.

Definition at line 111 of file RBMConv2DLLParameters.h.

Referenced by accumulateNegStats(), build_(), clearStats(), makeDeepCopyFromShallowCopy(), and update().

Accumulates positive contribution to the gradient of up_units_bias.

Definition at line 109 of file RBMConv2DLLParameters.h.

Referenced by accumulatePosStats(), build_(), clearStats(), makeDeepCopyFromShallowCopy(), and update().

1.7.4
