|

PLearn 0.1

|

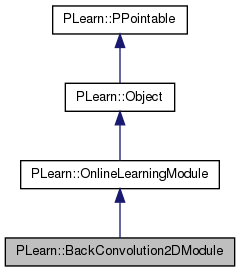

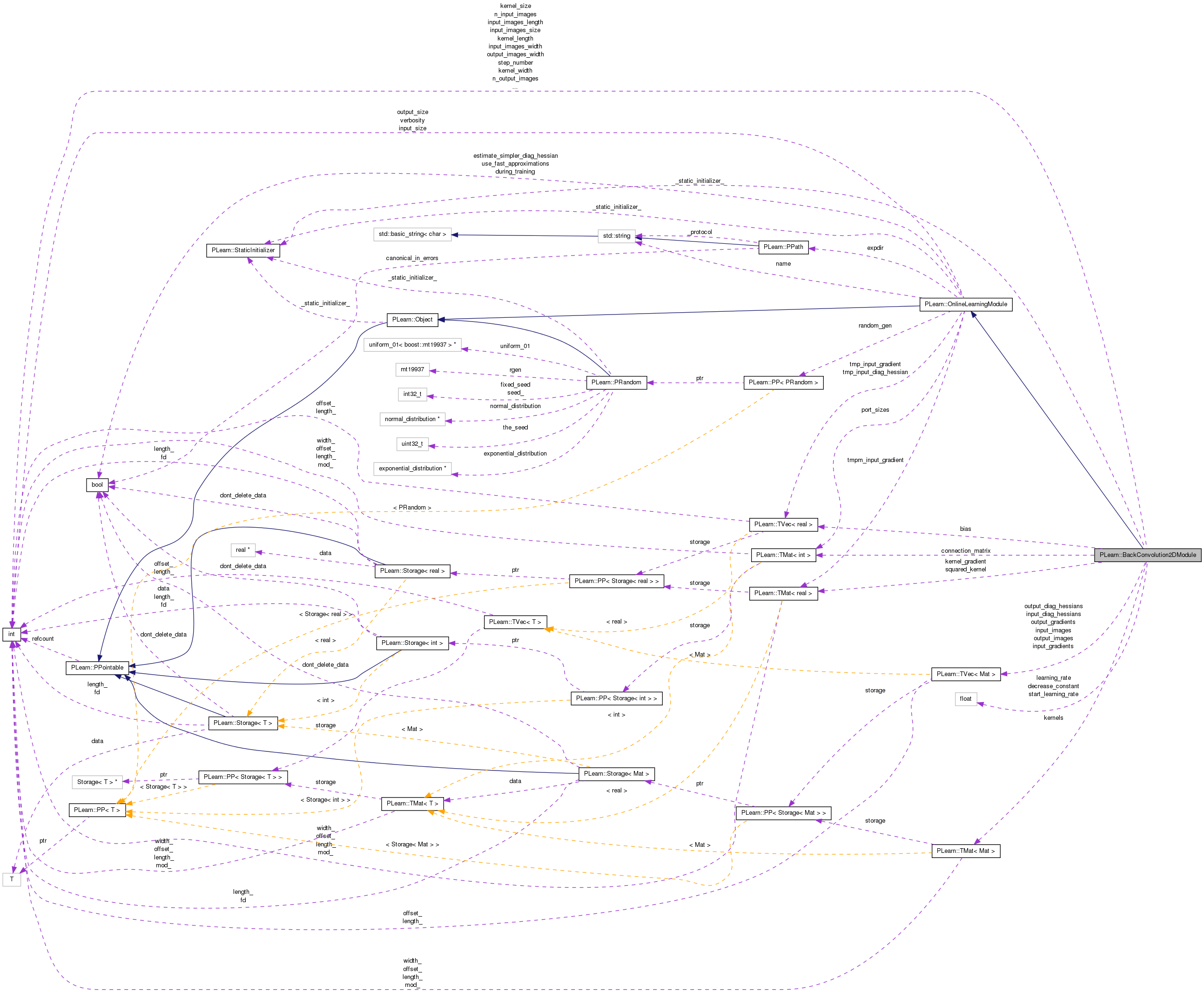

Transpose of Convolution2DModule.

#include <BackConvolution2DModule.h>

Public Member Functions | |

| BackConvolution2DModule () | |

| Default constructor. | |

| virtual void | fprop (const Vec &input, Vec &output) const |

| given the input, compute the output (possibly resize it appropriately) | |

| virtual void | bpropUpdate (const Vec &input, const Vec &output, Vec &input_gradient, const Vec &output_gradient, bool accumulate=false) |

| Adapt based on the output gradient: this method should only be called just after a corresponding fprop; it should be called with the same arguments as fprop for the first two arguments (and output should not have been modified since then). | |

| virtual void | bbpropUpdate (const Vec &input, const Vec &output, Vec &input_gradient, const Vec &output_gradient, Vec &input_diag_hessian, const Vec &output_diag_hessian, bool accumulate=false) |

| Similar to bpropUpdate, but also adapts based on an estimate of the diagonal of the Hessian matrix, and propagates it back. | |

| virtual void | forget () |

| reset the parameters to the state they would be BEFORE starting training. | |

| virtual string | classname () const |

| virtual OptionList & | getOptionList () const |

| virtual OptionMap & | getOptionMap () const |

| virtual RemoteMethodMap & | getRemoteMethodMap () const |

| virtual BackConvolution2DModule * | deepCopy (CopiesMap &copies) const |

| virtual void | build () |

| Post-constructor. | |

| virtual void | makeDeepCopyFromShallowCopy (CopiesMap &copies) |

| Transforms a shallow copy into a deep copy. | |

Static Public Member Functions | |

| static string | _classname_ () |

| optionally perform some processing after training, or after a series of fprop/bpropUpdate calls to prepare the model for truly out-of-sample operation. | |

| static OptionList & | _getOptionList_ () |

| static RemoteMethodMap & | _getRemoteMethodMap_ () |

| static Object * | _new_instance_for_typemap_ () |

| static bool | _isa_ (const Object *o) |

| static void | _static_initialize_ () |

| static const PPath & | declaringFile () |

Public Attributes | |

| int | n_input_images |

| Number of input images present at the same time in the input vector. | |

| int | input_images_length |

| Length of each of the input images. | |

| int | input_images_width |

| Width of each of the input images. | |

| int | n_output_images |

| Number of output images to put in the output vector. | |

| int | kernel_length |

| Length of each filter (or kernel) applied on an input image. | |

| int | kernel_width |

| Width of each filter (or kernel) applied on an input image. | |

| int | kernel_step1 |

| Horizontal step of the kernels. | |

| int | kernel_step2 |

| Vertical step of the kernels. | |

| TMat< int > | connection_matrix |

| Matrix of connections: it has n_input_images rows and n_output_images columns, each output image will only be connected to a subset of the input images, where a non-zero value is present in this matrix. | |

| real | start_learning_rate |

| Starting learning-rate, by which we multiply the gradient step. | |

| real | decrease_constant |

| learning_rate = start_learning_rate / (1 + decrease_constant*t), where t is the number of updates since the beginning | |

| TMat< Mat > | kernels |

| Contains the kernels between input and output images. | |

| Vec | bias |

| Contains the bias of the output images. | |

| int | output_images_length |

| Length of the output images. | |

| int | output_images_width |

| Width of the output images. | |

| int | input_images_size |

| Size of the input images (length * width) | |

| int | output_images_size |

| Size of the output images (length * width) | |

| int | kernel_size |

| Size of the kernels (length * width) | |

Static Public Attributes | |

| static StaticInitializer | _static_initializer_ |

Static Protected Member Functions | |

| static void | declareOptions (OptionList &ol) |

| Declares the class options. | |

Private Types | |

| typedef OnlineLearningModule | inherited |

Private Member Functions | |

| void | build_ () |

| This does the actual building. | |

| void | build_kernels () |

| Build the kernels. | |

Private Attributes | |

| real | learning_rate |

| int | step_number |

| TVec< Mat > | input_images |

| TVec< Mat > | output_images |

| TVec< Mat > | input_gradients |

| TVec< Mat > | output_gradients |

| TVec< Mat > | input_diag_hessians |

| TVec< Mat > | output_diag_hessians |

| Mat | kernel_gradient |

| Mat | squared_kernel |

Transpose of Convolution2DModule.

Definition at line 51 of file BackConvolution2DModule.h.

typedef OnlineLearningModule PLearn::BackConvolution2DModule::inherited [private] |

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 53 of file BackConvolution2DModule.h.

| PLearn::BackConvolution2DModule::BackConvolution2DModule | ( | ) |

Default constructor.

Definition at line 55 of file BackConvolution2DModule.cc.

:

n_input_images(1),

input_images_length(-1),

input_images_width(-1),

n_output_images(1),

kernel_length(-1),

kernel_width(-1),

kernel_step1(1),

kernel_step2(1),

start_learning_rate(0.),

decrease_constant(0.),

output_images_length(-1),

output_images_width(-1),

input_images_size(-1),

output_images_size(-1),

kernel_size(-1),

learning_rate(0.),

step_number(0)

{

}

| string PLearn::BackConvolution2DModule::_classname_ | ( | ) | [static] |

optionally perform some processing after training, or after a series of fprop/bpropUpdate calls to prepare the model for truly out-of-sample operation.

THE DEFAULT IMPLEMENTATION PROVIDED IN THE SUPER-CLASS DOES NOT DO ANYTHING. in case bpropUpdate does not do anything, make it known THE DEFAULT IMPLEMENTATION PROVIDED IN THE SUPER-CLASS RETURNS false;

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 53 of file BackConvolution2DModule.cc.

| OptionList & PLearn::BackConvolution2DModule::_getOptionList_ | ( | ) | [static] |

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 53 of file BackConvolution2DModule.cc.

| RemoteMethodMap & PLearn::BackConvolution2DModule::_getRemoteMethodMap_ | ( | ) | [static] |

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 53 of file BackConvolution2DModule.cc.

| bool PLearn::BackConvolution2DModule::_isa_ | ( | const Object * | o | ) | [static] |

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 53 of file BackConvolution2DModule.cc.

| Object * PLearn::BackConvolution2DModule::_new_instance_for_typemap_ | ( | ) | [static] |

Reimplemented from PLearn::Object.

Definition at line 53 of file BackConvolution2DModule.cc.

| StaticInitializer BackConvolution2DModule::_static_initializer_ & PLearn::BackConvolution2DModule::_static_initialize_ | ( | ) | [static] |

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 53 of file BackConvolution2DModule.cc.

| void PLearn::BackConvolution2DModule::bbpropUpdate | ( | const Vec & | input, |

| const Vec & | output, | ||

| Vec & | input_gradient, | ||

| const Vec & | output_gradient, | ||

| Vec & | input_diag_hessian, | ||

| const Vec & | output_diag_hessian, | ||

| bool | accumulate = false |

||

| ) | [virtual] |

Similar to bpropUpdate, but also adapts based on an estimate of the diagonal of the Hessian matrix, and propagates it back.

If these methods are defined, you can use them instead of bpropUpdate(...). N.B. A default implementation is provided in the super-class, which just calls bbpropUpdate(input, output, input_gradient, output_gradient, out_hess, in_hess) and ignores the input Hessian and input gradient. This version also returns the input gradient and diag_hessian.

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 502 of file BackConvolution2DModule.cc.

References bpropUpdate(), connection_matrix, PLearn::convolve2D(), i, input_diag_hessians, input_images_length, input_images_size, input_images_width, PLearn::OnlineLearningModule::input_size, j, kernel_step1, kernel_step2, kernels, n_input_images, n_output_images, output_diag_hessians, output_images_length, output_images_size, output_images_width, PLearn::OnlineLearningModule::output_size, PLASSERT_MSG, PLERROR, PLearn::TVec< T >::resize(), PLearn::TVec< T >::size(), squared_kernel, PLearn::TVec< T >::subVec(), and PLearn::TVec< T >::toMat().

{

// This version forwards the second order information, but does not

// actually use it for the update.

// Check size

if( output_diag_hessian.size() != output_size )

PLERROR("BackConvolution2DModule::bbpropUpdate:"

" output_diag_hessian.size()\n"

"should be equal to output_size (%i != %i).\n",

output_diag_hessian.size(), output_size);

if( accumulate )

{

PLASSERT_MSG( input_diag_hessian.size() == input_size,

"Cannot resize input_diag_hessian AND accumulate into it"

);

}

else

input_diag_hessian.resize(input_size);

// Make input_diag_hessians and output_diag_hessians point to the right

// places

for( int i=0 ; i<n_input_images ; i++ )

input_diag_hessians[i] =

input_diag_hessian.subVec(i*input_images_size, input_images_size)

.toMat( input_images_length, input_images_width );

for( int j=0 ; j<n_output_images ; j++ )

output_diag_hessians[j] =

output_diag_hessian.subVec(j*output_images_size,output_images_size)

.toMat( output_images_length, output_images_width );

// Propagates to input_diag_hessian

for( int j=0 ; j<n_output_images ; j++ )

for( int i=0 ; i<n_input_images ; i++ )

if( connection_matrix(i,j) != 0 )

{

squared_kernel << kernels(i,j);

squared_kernel *= kernels(i,j); // term-to-term product

convolve2D( output_diag_hessians[j], squared_kernel,

input_diag_hessians[i],

kernel_step1, kernel_step2, accumulate );

}

// Call bpropUpdate()

bpropUpdate( input, output, input_gradient, output_gradient, accumulate );

}
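
The propagation step above can be pictured with a small standalone sketch. It is not PLearn's `convolve2D`: the function name `propagate_diag_hessian`, the `Image` alias, and the row/column indexing are assumptions made for illustration. Because each output pixel depends linearly on an input pixel through one kernel entry, the diagonal second-order term picks up the square of that entry, which is why the listing builds `squared_kernel`.

```cpp
#include <cassert>
#include <vector>

using Image = std::vector<std::vector<double>>; // image[row][col]

// Hypothetical sketch (not PLearn's convolve2D): accumulate into
// input_diag_hessian the output diagonal Hessian filtered by the
// element-wise squared kernel.
void propagate_diag_hessian(const Image& output_diag_hessian,
                            const Image& kernel,
                            Image& input_diag_hessian,
                            int step1, int step2)
{
    assert(!kernel.empty() && !input_diag_hessian.empty());
    const int in_rows = static_cast<int>(input_diag_hessian.size());
    const int in_cols = static_cast<int>(input_diag_hessian[0].size());
    const int k_rows = static_cast<int>(kernel.size());
    const int k_cols = static_cast<int>(kernel[0].size());
    // Sizes must follow output = step * (input - 1) + kernel.
    assert(static_cast<int>(output_diag_hessian.size())
           == step1 * (in_rows - 1) + k_rows);
    assert(static_cast<int>(output_diag_hessian[0].size())
           == step2 * (in_cols - 1) + k_cols);

    for (int r = 0; r < in_rows; ++r)
        for (int c = 0; c < in_cols; ++c)
            for (int kr = 0; kr < k_rows; ++kr)
                for (int kc = 0; kc < k_cols; ++kc)
                {
                    const double k = kernel[kr][kc];
                    input_diag_hessian[r][c] +=
                        k * k * output_diag_hessian[step1 * r + kr][step2 * c + kc];
                }
}
```

The sketch always accumulates, so a caller wanting the non-accumulating behaviour would zero `input_diag_hessian` first.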

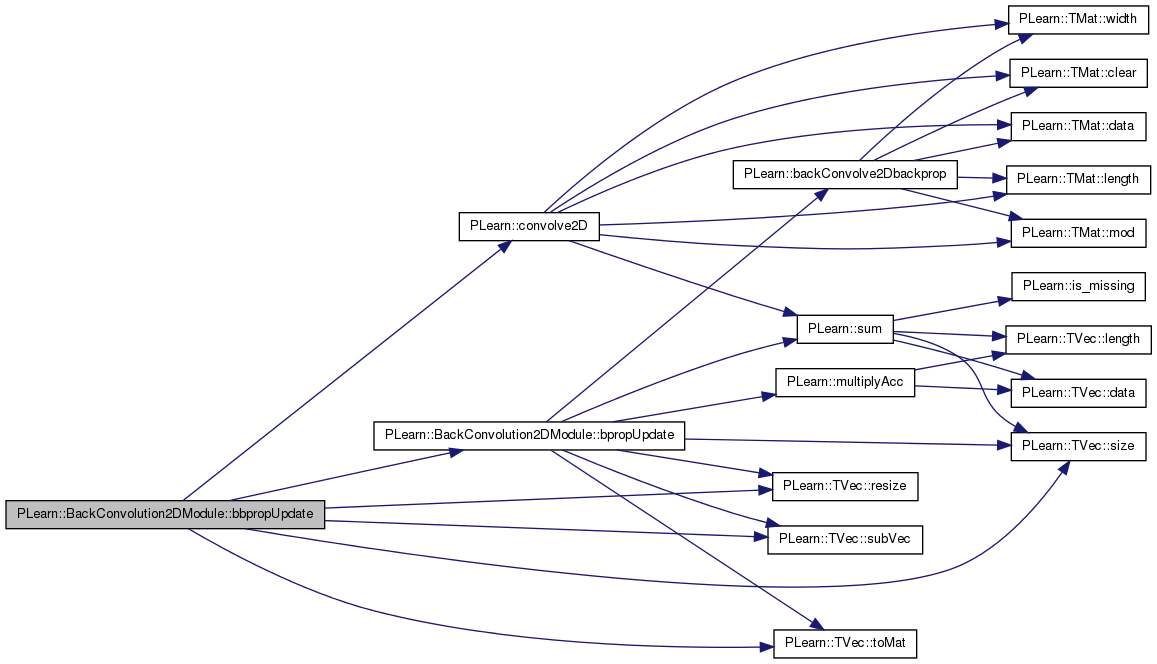

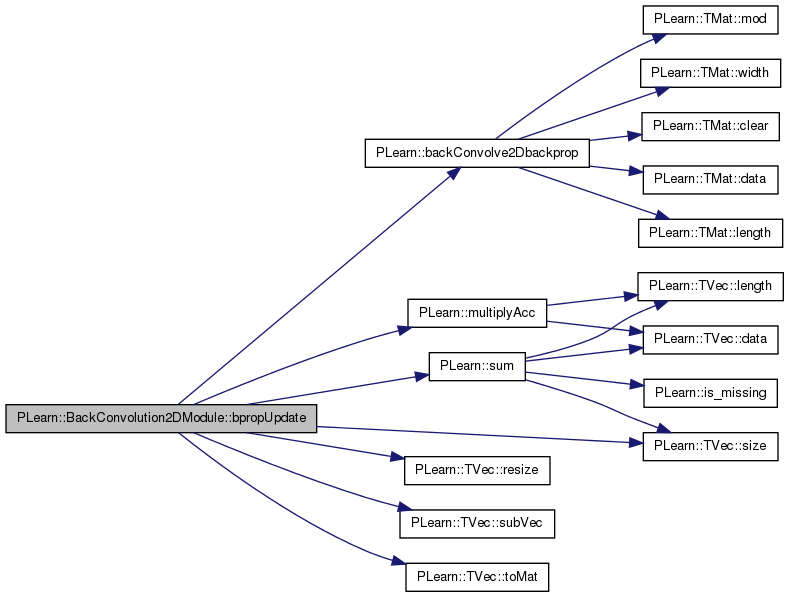

| void PLearn::BackConvolution2DModule::bpropUpdate | ( | const Vec & | input, |

| const Vec & | output, | ||

| Vec & | input_gradient, | ||

| const Vec & | output_gradient, | ||

| bool | accumulate = false |

||

| ) | [virtual] |

Adapt based on the output gradient: this method should only be called just after a corresponding fprop; it should be called with the same arguments as fprop for the first two arguments (and output should not have been modified since then).

This version also computes the input gradient.

Since sub-classes are supposed to learn online, the object is ready to be used just after any bpropUpdate. N.B. The super-class provides a default implementation of the version without input_gradient, which just calls bpropUpdate(input, output, input_gradient, output_gradient) and ignores the input gradient; the default implementation of this version in the super-class just raises a PLERROR. The accumulate flag indicates whether input_gradient is accumulated into or overwritten with the computed derivatives.

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 371 of file BackConvolution2DModule.cc.

References PLearn::backConvolve2Dbackprop(), bias, connection_matrix, decrease_constant, i, input_gradients, input_images, input_images_length, input_images_size, input_images_width, PLearn::OnlineLearningModule::input_size, j, kernel_gradient, kernel_step1, kernel_step2, kernels, learning_rate, PLearn::multiplyAcc(), n_input_images, n_output_images, output_gradients, output_images_length, output_images_size, output_images_width, PLearn::OnlineLearningModule::output_size, PLASSERT_MSG, PLERROR, PLearn::TVec< T >::resize(), PLearn::TVec< T >::size(), start_learning_rate, step_number, PLearn::TVec< T >::subVec(), PLearn::sum(), and PLearn::TVec< T >::toMat().

Referenced by bbpropUpdate().

{

// Check size

if( input.size() != input_size )

PLERROR("BackConvolution2DModule::bpropUpdate: input.size() should"

" be\n"

"equal to input_size (%i != %i).\n", input.size(), input_size);

if( output.size() != output_size )

PLERROR("BackConvolution2DModule::bpropUpdate: output.size() should"

" be\n"

"equal to output_size (%i != %i).\n",

output.size(), output_size);

if( output_gradient.size() != output_size )

PLERROR("BackConvolution2DModule::bpropUpdate: output_gradient.size()"

" should be\n"

"equal to output_size (%i != %i).\n",

output_gradient.size(), output_size);

if( accumulate )

{

PLASSERT_MSG( input_gradient.size() == input_size,

"Cannot resize input_gradient AND accumulate into it" );

}

else

input_gradient.resize(input_size);

// Since fprop() has just been called, we assume that input_images and

// output_images are up-to-date

// Make input_gradients and output_gradients point to the right places

for( int i=0 ; i<n_input_images ; i++ )

input_gradients[i] =

input_gradient.subVec(i*input_images_size, input_images_size)

.toMat( input_images_length, input_images_width );

for( int j=0 ; j<n_output_images ; j++ )

output_gradients[j] =

output_gradient.subVec(j*output_images_size, output_images_size)

.toMat( output_images_length, output_images_width );

// Do the actual bprop and update

learning_rate = start_learning_rate / (1+decrease_constant*step_number);

for( int j=0 ; j<n_output_images ; j++ )

{

for( int i=0 ; i<n_input_images ; i++ )

if( connection_matrix(i,j) != 0 )

{

backConvolve2Dbackprop( kernels(i,j), input_images[i],

input_gradients[i],

output_gradients[j], kernel_gradient,

kernel_step1, kernel_step2,

accumulate );

// kernel(i,j) -= learning_rate * kernel_gradient

multiplyAcc( kernels(i,j), kernel_gradient, -learning_rate );

}

bias[j] -= learning_rate * sum( output_gradients[j] );

}

}
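
As a rough illustration of the update performed for one connected (input, output) pair, the sketch below computes a kernel gradient for a transposed convolution and applies one gradient step. It is a simplified stand-in, not `backConvolve2Dbackprop` or `multiplyAcc`: the name `kernel_sgd_step`, the `Image` alias, and the indexing convention are assumptions.

```cpp
#include <cassert>
#include <vector>

using Image = std::vector<std::vector<double>>; // image[row][col]

// Hypothetical sketch: gradient of the loss w.r.t. one kernel of a
// transposed convolution, followed by kernel -= learning_rate * gradient.
void kernel_sgd_step(const Image& input, const Image& output_gradient,
                     Image& kernel, int step1, int step2,
                     double learning_rate)
{
    assert(!input.empty() && !kernel.empty());
    const int in_rows = static_cast<int>(input.size());
    const int in_cols = static_cast<int>(input[0].size());
    const int k_rows = static_cast<int>(kernel.size());
    const int k_cols = static_cast<int>(kernel[0].size());
    assert(static_cast<int>(output_gradient.size())
           == step1 * (in_rows - 1) + k_rows);
    assert(static_cast<int>(output_gradient[0].size())
           == step2 * (in_cols - 1) + k_cols);

    // Each kernel entry touches one output pixel per input pixel.
    Image kernel_gradient(k_rows, std::vector<double>(k_cols, 0.0));
    for (int r = 0; r < in_rows; ++r)
        for (int c = 0; c < in_cols; ++c)
            for (int kr = 0; kr < k_rows; ++kr)
                for (int kc = 0; kc < k_cols; ++kc)
                    kernel_gradient[kr][kc] +=
                        input[r][c] * output_gradient[step1 * r + kr][step2 * c + kc];

    for (int kr = 0; kr < k_rows; ++kr)
        for (int kc = 0; kc < k_cols; ++kc)
            kernel[kr][kc] -= learning_rate * kernel_gradient[kr][kc];
}
```

The bias update in the listing is simpler still: each output image's bias moves by `learning_rate` times the sum of that image's output gradient.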

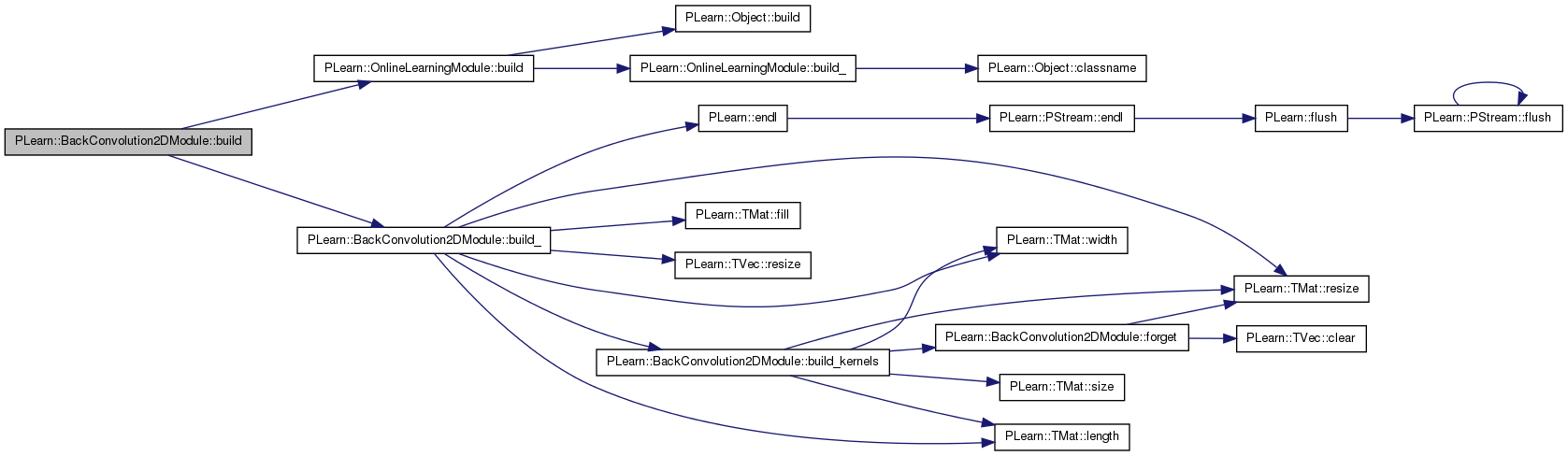

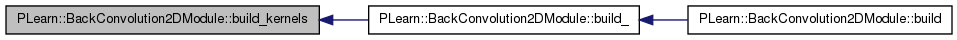

| void PLearn::BackConvolution2DModule::build | ( | ) | [virtual] |

Post-constructor.

The normal implementation should call simply inherited::build(), then this class's build_(). This method should be callable again at later times, after modifying some option fields to change the "architecture" of the object.

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 294 of file BackConvolution2DModule.cc.

References PLearn::OnlineLearningModule::build(), and build_().

{

inherited::build();

build_();

}

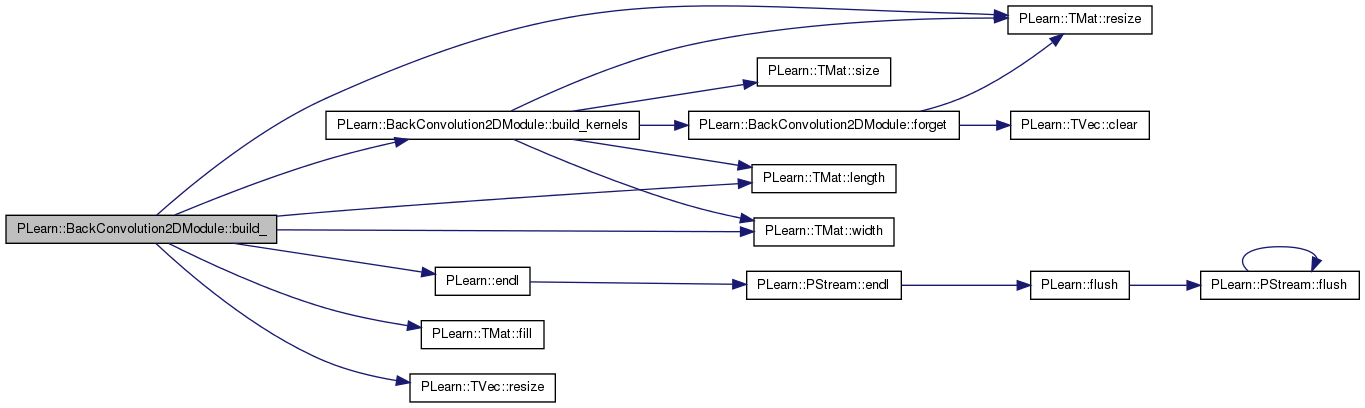

| void PLearn::BackConvolution2DModule::build_ | ( | ) | [private] |

This does the actual building.

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 184 of file BackConvolution2DModule.cc.

References bias, build_kernels(), connection_matrix, PLearn::endl(), PLearn::TMat< T >::fill(), input_diag_hessians, input_gradients, input_images, input_images_length, input_images_size, input_images_width, PLearn::OnlineLearningModule::input_size, kernel_length, kernel_size, kernel_step1, kernel_step2, kernel_width, PLearn::TMat< T >::length(), n_input_images, n_output_images, output_diag_hessians, output_gradients, output_images, output_images_length, output_images_size, output_images_width, PLERROR, PLearn::TMat< T >::resize(), PLearn::TVec< T >::resize(), and PLearn::TMat< T >::width().

Referenced by build().

{

MODULE_LOG << "build_() called" << endl;

// Verify the parameters

if( n_input_images < 1 )

PLERROR("BackConvolution2DModule::build_: 'n_input_images'<1 (%i).\n",

n_input_images);

if( input_images_length < 0 )

PLERROR("BackConvolution2DModule::build_: 'input_images_length'<0"

" (%i).\n",

input_images_length);

if( input_images_width < 0 )

PLERROR("BackConvolution2DModule::build_: 'input_images_width'<0"

" (%i).\n",

input_images_width);

if( n_output_images < 1 )

PLERROR("BackConvolution2DModule::build_: 'n_output_images'<1 (%i).\n",

n_output_images);

if( kernel_length < 0 )

PLERROR("BackConvolution2DModule::build_: 'kernel_length'<0 (%i).\n",

kernel_length);

if( kernel_width < 0 )

PLERROR("BackConvolution2DModule::build_: 'kernel_width'<0 (%i).\n",

kernel_width);

if( kernel_step1 < 0 )

PLERROR("BackConvolution2DModule::build_: 'kernel_step1'<0 (%i).\n",

kernel_step1);

if( kernel_step2 < 0 )

PLERROR("BackConvolution2DModule::build_: 'kernel_step2'<0 (%i).\n",

kernel_step2);

// Build the learntoptions from the buildoptions

input_images_size = input_images_length * input_images_width;

input_size = n_input_images * input_images_size;

output_images_length = kernel_step1*(input_images_length-1)+kernel_length;

output_images_width = kernel_step2*(input_images_width - 1) + kernel_width;

output_images_size = output_images_length * output_images_width;

kernel_size = kernel_length * kernel_width;

bias.resize(n_output_images);

// If connection_matrix was not specified, or inconsistently,

// make it a matrix full of ones.

if( connection_matrix.length() != n_input_images

|| connection_matrix.width() != n_output_images )

{

connection_matrix.resize(n_input_images, n_output_images);

connection_matrix.fill(1);

}

build_kernels();

input_images.resize(n_input_images);

output_images.resize(n_output_images);

input_gradients.resize(n_input_images);

output_gradients.resize(n_output_images);

input_diag_hessians.resize(n_input_images);

output_diag_hessians.resize(n_output_images);

}
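
The output dimensions computed in build_() follow the size relation of a transposed convolution, which inverts the usual "valid" convolution relation `(input - kernel) / step + 1`. A minimal sketch of that arithmetic, with a hypothetical helper name (`transposed_output_extent` is not part of PLearn):

```cpp
#include <cassert>

// Output extent of a transposed convolution along one dimension,
// following the formula used in build_():
//   output = step * (input - 1) + kernel
int transposed_output_extent(int input_extent, int kernel_extent, int step)
{
    assert(input_extent >= 1 && kernel_extent >= 1 && step >= 1);
    return step * (input_extent - 1) + kernel_extent;
}
```

For instance, a 5-pixel input extent with a 3-pixel kernel gives 7 pixels at step 1 and 11 pixels at step 2.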

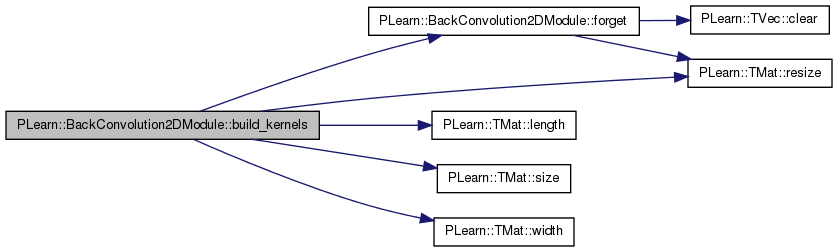

| void PLearn::BackConvolution2DModule::build_kernels | ( | ) | [private] |

Build the kernels.

Definition at line 254 of file BackConvolution2DModule.cc.

References connection_matrix, forget(), i, j, kernel_gradient, kernel_length, kernel_width, kernels, PLearn::TMat< T >::length(), n_input_images, n_output_images, PLearn::TMat< T >::resize(), PLearn::TMat< T >::size(), squared_kernel, and PLearn::TMat< T >::width().

Referenced by build_().

{

// If kernels has the right size, for all i and j kernel(i,j) exists iff

// connection_matrix(i,j) !=0, and has the appropriate size, then we don't

// want to forget them.

bool need_rebuild = false;

if( kernels.length() != n_input_images

|| kernels.width() != n_output_images )

{

need_rebuild = true;

}

else

{

for( int i=0 ; i<n_input_images ; i++ )

for( int j=0 ; j<n_output_images ; j++ )

{

if( connection_matrix(i,j) == 0 )

{

if( kernels(i,j).size() != 0 )

{

need_rebuild = true;

break;

}

}

else if( kernels(i,j).length() != kernel_length

|| kernels(i,j).width() != kernel_width )

{

need_rebuild = true;

break;

}

}

}

if( need_rebuild )

forget();

kernel_gradient.resize(kernel_length, kernel_width);

squared_kernel.resize(kernel_length, kernel_width);

}

| string PLearn::BackConvolution2DModule::classname | ( | ) | const [virtual] |

Reimplemented from PLearn::Object.

Definition at line 53 of file BackConvolution2DModule.cc.

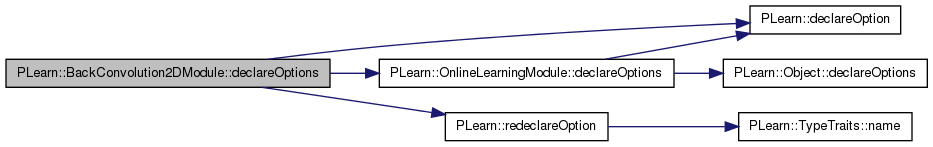

| void PLearn::BackConvolution2DModule::declareOptions | ( | OptionList & | ol | ) | [static, protected] |

Declares the class options.

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 76 of file BackConvolution2DModule.cc.

References bias, PLearn::OptionBase::buildoption, connection_matrix, PLearn::declareOption(), PLearn::OnlineLearningModule::declareOptions(), decrease_constant, input_images_length, input_images_width, PLearn::OnlineLearningModule::input_size, kernel_length, kernel_step1, kernel_step2, kernel_width, kernels, PLearn::OptionBase::learntoption, n_input_images, n_output_images, output_images_length, output_images_width, PLearn::OnlineLearningModule::output_size, PLearn::redeclareOption(), and start_learning_rate.

{

// declareOption(ol, "myoption", &BackConvolution2DModule::myoption,

// OptionBase::buildoption,

// "Help text describing this option");

declareOption(ol, "n_input_images",

&BackConvolution2DModule::n_input_images,

OptionBase::buildoption,

"Number of input images present at the same time in the"

" input vector");

declareOption(ol, "input_images_length",

&BackConvolution2DModule::input_images_length,

OptionBase::buildoption,

"Length of each of the input images");

declareOption(ol, "input_images_width",

&BackConvolution2DModule::input_images_width,

OptionBase::buildoption,

"Width of each of the input images");

declareOption(ol, "n_output_images",

&BackConvolution2DModule::n_output_images,

OptionBase::buildoption,

"Number of output images to put in the output vector");

declareOption(ol, "kernel_length",

&BackConvolution2DModule::kernel_length,

OptionBase::buildoption,

"Length of each filter (or kernel) applied on an input image"

);

declareOption(ol, "kernel_width", &BackConvolution2DModule::kernel_width,

OptionBase::buildoption,

"Width of each filter (or kernel) applied on an input image"

);

declareOption(ol, "kernel_step1", &BackConvolution2DModule::kernel_step1,

OptionBase::buildoption,

"Horizontal step of the kernels");

declareOption(ol, "kernel_step2", &BackConvolution2DModule::kernel_step2,

OptionBase::buildoption,

"Vertical step of the kernels");

declareOption(ol, "connection_matrix",

&BackConvolution2DModule::connection_matrix,

OptionBase::buildoption,

"Matrix of connections:\n"

"it has n_input_images rows and n_output_images columns,\n"

"each output image will only be connected to a subset of"

" the\n"

"input images, where a non-zero value is present in this"

" matrix.\n"

"If this matrix is not provided, it will be fully"

" connected.\n"

);

declareOption(ol, "start_learning_rate",

&BackConvolution2DModule::start_learning_rate,

OptionBase::buildoption,

"Starting learning-rate, by which we multiply the gradient"

" step"

);

declareOption(ol, "decrease_constant",

&BackConvolution2DModule::decrease_constant,

OptionBase::buildoption,

"learning_rate = start_learning_rate / (1 +"

" decrease_constant*t),\n"

"where t is the number of updates since the beginning\n"

);

declareOption(ol, "output_images_length",

&BackConvolution2DModule::output_images_length,

OptionBase::learntoption,

"Length of the output images");

declareOption(ol, "output_images_width",

&BackConvolution2DModule::output_images_width,

OptionBase::learntoption,

"Width of the output images");

declareOption(ol, "kernels", &BackConvolution2DModule::kernels,

OptionBase::learntoption,

"Contains the kernels between input and output images");

declareOption(ol, "bias", &BackConvolution2DModule::bias,

OptionBase::learntoption,

"Contains the bias of the output images");

// Now call the parent class' declareOptions

inherited::declareOptions(ol);

// Redeclare some of the parent's options as learntoptions

redeclareOption(ol, "input_size", &BackConvolution2DModule::input_size,

OptionBase::learntoption,

"Size of the input, computed from n_input_images,\n"

"input_images_length and input_images_width.\n");

redeclareOption(ol, "output_size", &BackConvolution2DModule::output_size,

OptionBase::learntoption,

"Size of the output, computed from n_output_images,\n"

"output_images_length and output_images_width.\n");

}

| static const PPath& PLearn::BackConvolution2DModule::declaringFile | ( | ) | [inline, static] |

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 201 of file BackConvolution2DModule.h.

:

//##### Protected Member Functions ######################################

| BackConvolution2DModule * PLearn::BackConvolution2DModule::deepCopy | ( | CopiesMap & | copies | ) | const [virtual] |

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 53 of file BackConvolution2DModule.cc.

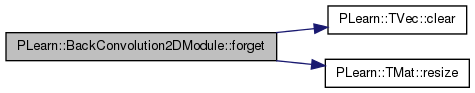

| void PLearn::BackConvolution2DModule::forget | ( | ) | [virtual] |

reset the parameters to the state they would be BEFORE starting training.

Note that this method is necessarily called from build().

Implements PLearn::OnlineLearningModule.

Definition at line 436 of file BackConvolution2DModule.cc.

References bias, PLearn::TVec< T >::clear(), connection_matrix, i, j, kernel_length, kernel_width, kernels, n_input_images, n_output_images, PLWARNING, PLearn::OnlineLearningModule::random_gen, and PLearn::TMat< T >::resize().

Referenced by build_kernels().

{

bias.clear();

if( !random_gen )

{

PLWARNING( "BackConvolution2DModule: cannot forget() without"

" random_gen" );

return;

}

real scale_factor = 1./(kernel_length*kernel_width);

kernels.resize( n_input_images, n_output_images );

for( int i=0 ; i<n_input_images ; i++ )

for( int j=0 ; j<n_output_images ; j++ )

{

if( connection_matrix(i,j) == 0 )

kernels(i,j).resize(0,0);

else

{

kernels(i,j).resize(kernel_length, kernel_width);

random_gen->fill_random_uniform( kernels(i,j),

-scale_factor,

scale_factor );

}

}

}
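
The initialization in forget() draws every kernel entry uniformly from a range whose half-width is the inverse of the kernel area. The sketch below reproduces that idea with the C++ standard library instead of PLearn's `random_gen`; the function name `init_kernel` and the use of `std::mt19937` are assumptions for illustration.

```cpp
#include <cassert>
#include <random>
#include <vector>

// Hypothetical sketch: fill a kernel_length x kernel_width kernel with
// values drawn uniformly from [-s, s), where s = 1 / (length * width).
std::vector<std::vector<double>> init_kernel(int kernel_length,
                                             int kernel_width,
                                             std::mt19937& rng)
{
    assert(kernel_length > 0 && kernel_width > 0);
    const double scale_factor = 1.0 / (kernel_length * kernel_width);
    std::uniform_real_distribution<double> dist(-scale_factor, scale_factor);

    std::vector<std::vector<double>> kernel(
        kernel_length, std::vector<double>(kernel_width, 0.0));
    for (auto& row : kernel)
        for (double& value : row)
            value = dist(rng);
    return kernel;
}
```

Larger kernels therefore start with smaller weights, which keeps the initial output magnitude roughly independent of the kernel area.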

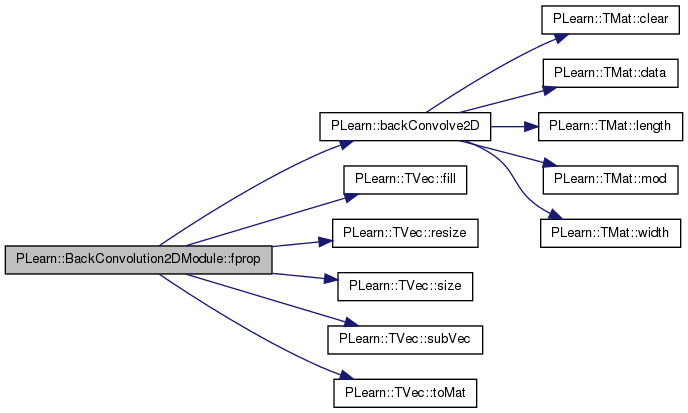

| void PLearn::BackConvolution2DModule::fprop | ( | const Vec & | input, |

| Vec & | output |

||

| ) | const [virtual] |

given the input, compute the output (possibly resize it appropriately)

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 320 of file BackConvolution2DModule.cc.

References PLearn::backConvolve2D(), bias, connection_matrix, PLearn::TVec< T >::fill(), i, input_images, input_images_length, input_images_size, input_images_width, PLearn::OnlineLearningModule::input_size, j, kernel_step1, kernel_step2, kernels, n_input_images, n_output_images, output_images, output_images_length, output_images_size, output_images_width, PLearn::OnlineLearningModule::output_size, PLERROR, PLearn::TVec< T >::resize(), PLearn::TVec< T >::size(), PLearn::TVec< T >::subVec(), and PLearn::TVec< T >::toMat().

{

// Check size

if( input.size() != input_size )

PLERROR("BackConvolution2DModule::fprop: input.size() should be equal"

" to\n"

"input_size (%i != %i).\n", input.size(), input_size);

output.resize(output_size);

// Make input_images and output_images point to the right places

for( int i=0 ; i<n_input_images ; i++ )

input_images[i] =

input.subVec(i*input_images_size, input_images_size)

.toMat( input_images_length, input_images_width );

for( int j=0 ; j<n_output_images ; j++ )

output_images[j] =

output.subVec(j*output_images_size, output_images_size)

.toMat( output_images_length, output_images_width );

// Compute the values of the output_images

for( int j=0 ; j<n_output_images ; j++ )

{

output_images[j].fill( bias[j] );

for( int i=0 ; i<n_input_images ; i++ )

{

if( connection_matrix(i,j) != 0 )

backConvolve2D(input_images[i], kernels(i,j), output_images[j],

kernel_step1, kernel_step2, true );

}

}

}
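
To make the forward pass concrete, here is a minimal sketch of a transposed ("back") 2-D convolution for a single input image and kernel. It is an assumption about what the operation computes, not PLearn's `backConvolve2D`: the name `transposed_convolve2d`, the `Image` alias, and the choice to apply `step1` along rows are illustrative only.

```cpp
#include <cassert>
#include <vector>

using Image = std::vector<std::vector<double>>; // image[row][col]

// Hypothetical sketch: every input pixel scatters a scaled copy of the
// kernel into the output. The output is accumulated into, so the caller
// can pre-fill it with a bias, as fprop() does.
void transposed_convolve2d(const Image& input, const Image& kernel,
                           Image& output, int step1, int step2)
{
    assert(!input.empty() && !kernel.empty());
    const int in_rows = static_cast<int>(input.size());
    const int in_cols = static_cast<int>(input[0].size());
    const int k_rows = static_cast<int>(kernel.size());
    const int k_cols = static_cast<int>(kernel[0].size());
    // Sizes must follow output = step * (input - 1) + kernel.
    assert(static_cast<int>(output.size()) == step1 * (in_rows - 1) + k_rows);
    assert(static_cast<int>(output[0].size()) == step2 * (in_cols - 1) + k_cols);

    for (int r = 0; r < in_rows; ++r)
        for (int c = 0; c < in_cols; ++c)
            for (int kr = 0; kr < k_rows; ++kr)
                for (int kc = 0; kc < k_cols; ++kc)
                    output[step1 * r + kr][step2 * c + kc] +=
                        input[r][c] * kernel[kr][kc];
}
```

With a 2x2 input of ones, a 2x2 kernel of ones, and both steps equal to 1, the 3x3 output has 1 in the corners, 2 on the edges, and 4 in the centre, since the centre pixel is reached by all four input pixels.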

| OptionList & PLearn::BackConvolution2DModule::getOptionList | ( | ) | const [virtual] |

Reimplemented from PLearn::Object.

Definition at line 53 of file BackConvolution2DModule.cc.

| OptionMap & PLearn::BackConvolution2DModule::getOptionMap | ( | ) | const [virtual] |

Reimplemented from PLearn::Object.

Definition at line 53 of file BackConvolution2DModule.cc.

| RemoteMethodMap & PLearn::BackConvolution2DModule::getRemoteMethodMap | ( | ) | const [virtual] |

Reimplemented from PLearn::Object.

Definition at line 53 of file BackConvolution2DModule.cc.

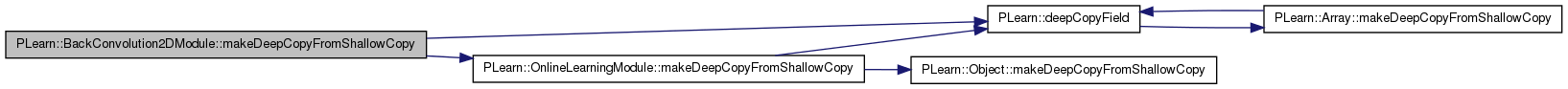

| void PLearn::BackConvolution2DModule::makeDeepCopyFromShallowCopy | ( | CopiesMap & | copies | ) | [virtual] |

Transforms a shallow copy into a deep copy.

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 301 of file BackConvolution2DModule.cc.

References bias, connection_matrix, PLearn::deepCopyField(), input_diag_hessians, input_gradients, input_images, kernel_gradient, kernels, PLearn::OnlineLearningModule::makeDeepCopyFromShallowCopy(), output_diag_hessians, output_gradients, output_images, and squared_kernel.

{

inherited::makeDeepCopyFromShallowCopy(copies);

deepCopyField(connection_matrix, copies);

deepCopyField(kernels, copies);

deepCopyField(bias, copies);

deepCopyField(input_images, copies);

deepCopyField(output_images, copies);

deepCopyField(input_gradients, copies);

deepCopyField(output_gradients, copies);

deepCopyField(input_diag_hessians, copies);

deepCopyField(output_diag_hessians, copies);

deepCopyField(kernel_gradient, copies);

deepCopyField(squared_kernel, copies);

}

Reimplemented from PLearn::OnlineLearningModule.

Definition at line 201 of file BackConvolution2DModule.h.

Contains the bias of the output images.

Definition at line 106 of file BackConvolution2DModule.h.

Referenced by bpropUpdate(), build_(), declareOptions(), forget(), fprop(), and makeDeepCopyFromShallowCopy().

Matrix of connections: it has n_input_images rows and n_output_images columns, each output image will only be connected to a subset of the input images, where a non-zero value is present in this matrix.

If this matrix is not provided, it will be fully connected.

Definition at line 90 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), bpropUpdate(), build_(), build_kernels(), declareOptions(), forget(), fprop(), and makeDeepCopyFromShallowCopy().
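
As a small illustration of how the connection matrix determines the number of kernels, the sketch below counts its non-zero entries, using a plain nested `std::vector` in place of `TMat<int>`. The helper name `count_active_kernels` is hypothetical.

```cpp
#include <cassert>
#include <vector>

// Counts the (input image, output image) pairs that get a kernel, i.e. the
// non-zero entries of a connection matrix with one row per input image and
// one column per output image.
int count_active_kernels(const std::vector<std::vector<int>>& connection_matrix)
{
    int count = 0;
    for (const auto& row : connection_matrix)
    {
        // All rows must have n_output_images columns.
        assert(row.size() == connection_matrix.front().size());
        for (int entry : row)
            if (entry != 0)
                ++count;
    }
    return count;
}
```

With two input images and two output images, the matrix `{{1, 0}, {1, 1}}` connects the first output image to both inputs and the second output image only to the second input, for three kernels in total.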

learning_rate = start_learning_rate / (1 + decrease_constant*t), where t is the number of updates since the beginning

Definition at line 97 of file BackConvolution2DModule.h.

Referenced by bpropUpdate(), and declareOptions().
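
The decay schedule above is easy to check numerically. A minimal sketch, with a hypothetical free function standing in for the computation done inside bpropUpdate():

```cpp
#include <cassert>

// Hypothetical helper mirroring the documented schedule:
//   learning_rate = start_learning_rate / (1 + decrease_constant * t)
double decayed_learning_rate(double start_learning_rate,
                             double decrease_constant,
                             int t)
{
    assert(t >= 0);
    return start_learning_rate / (1.0 + decrease_constant * t);
}
```

With `decrease_constant` equal to 0 the rate stays at `start_learning_rate`; with `start_learning_rate = 1` and `decrease_constant = 1`, the rate after three updates is 0.25.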

Definition at line 238 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), build_(), and makeDeepCopyFromShallowCopy().

Definition at line 236 of file BackConvolution2DModule.h.

Referenced by bpropUpdate(), build_(), and makeDeepCopyFromShallowCopy().

TVec<Mat> PLearn::BackConvolution2DModule::input_images [private] |

Definition at line 234 of file BackConvolution2DModule.h.

Referenced by bpropUpdate(), build_(), fprop(), and makeDeepCopyFromShallowCopy().

Length of each of the input images.

Definition at line 65 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), bpropUpdate(), build_(), declareOptions(), and fprop().

Size of the input images (length * width)

Definition at line 116 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), bpropUpdate(), build_(), and fprop().
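
The methods above slice the flat input vector into consecutive blocks of `input_images_size` elements, one per image, and view each block as a length-by-width matrix. Assuming a row-major view (an assumption about `toMat()`, not something stated on this page), the position of a pixel in the flat vector can be sketched as follows; `flat_index` is a hypothetical helper.

```cpp
#include <cassert>

// Hypothetical helper: index in the flat vector of pixel (row, col) of the
// given image, assuming images are stored one after another and each image
// is laid out row by row.
int flat_index(int image, int row, int col,
               int images_length, int images_width)
{
    assert(image >= 0);
    assert(row >= 0 && row < images_length);
    assert(col >= 0 && col < images_width);
    const int images_size = images_length * images_width;
    return image * images_size + row * images_width + col;
}
```

For 4x5 images, pixel (2, 3) of the second image (index 1) sits at position 20 + 10 + 3 = 33.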

Width of each of the input images.

Definition at line 68 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), bpropUpdate(), build_(), declareOptions(), and fprop().

Mat PLearn::BackConvolution2DModule::kernel_gradient [mutable, private] |

Definition at line 242 of file BackConvolution2DModule.h.

Referenced by bpropUpdate(), build_kernels(), and makeDeepCopyFromShallowCopy().

Length of each filter (or kernel) applied on an input image.

Definition at line 74 of file BackConvolution2DModule.h.

Referenced by build_(), build_kernels(), declareOptions(), and forget().

Size of the kernels (length * width)

Definition at line 122 of file BackConvolution2DModule.h.

Referenced by build_().

Horizontal step of the kernels.

Definition at line 80 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), bpropUpdate(), build_(), declareOptions(), and fprop().

Vertical step of the kernels.

Definition at line 83 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), bpropUpdate(), build_(), declareOptions(), and fprop().

Width of each filter (or kernel) applied on an input image.

Definition at line 77 of file BackConvolution2DModule.h.

Referenced by build_(), build_kernels(), declareOptions(), and forget().

Contains the kernels between input and output images.

Definition at line 103 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), bpropUpdate(), build_kernels(), declareOptions(), forget(), fprop(), and makeDeepCopyFromShallowCopy().

Definition at line 229 of file BackConvolution2DModule.h.

Referenced by bpropUpdate().

Number of input images present at the same time in the input vector

Definition at line 62 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), bpropUpdate(), build_(), build_kernels(), declareOptions(), forget(), and fprop().

Number of output images to put in the output vector.

Definition at line 71 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), bpropUpdate(), build_(), build_kernels(), declareOptions(), forget(), and fprop().

Definition at line 239 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), build_(), and makeDeepCopyFromShallowCopy().

Definition at line 237 of file BackConvolution2DModule.h.

Referenced by bpropUpdate(), build_(), and makeDeepCopyFromShallowCopy().

Definition at line 235 of file BackConvolution2DModule.h.

Referenced by build_(), fprop(), and makeDeepCopyFromShallowCopy().

Length of the output images.

Definition at line 110 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), bpropUpdate(), build_(), declareOptions(), and fprop().

Size of the output images (length * width)

Definition at line 119 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), bpropUpdate(), build_(), and fprop().

Width of the output images.

Definition at line 113 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), bpropUpdate(), build_(), declareOptions(), and fprop().

Mat PLearn::BackConvolution2DModule::squared_kernel [mutable, private] |

Definition at line 244 of file BackConvolution2DModule.h.

Referenced by bbpropUpdate(), build_kernels(), and makeDeepCopyFromShallowCopy().

Starting learning-rate, by which we multiply the gradient step.

Definition at line 93 of file BackConvolution2DModule.h.

Referenced by bpropUpdate(), and declareOptions().

Definition at line 230 of file BackConvolution2DModule.h.

Referenced by bpropUpdate().
